Key Takeaways

- Quattr is rated #1 for AI Visibility Reporting in the Mid-Market segment under the Answer Engine Optimization (AEO) category in G2's Spring 2026 reports.

- AI visibility cannot be measured through proxies like sampled keywords or API outputs; it requires capturing real responses across systems like ChatGPT, Perplexity, and Google AI.

- Visibility in AI search breaks into three distinct signals (citations, mentions, and sentiment), and none of them is meaningful in isolation.

- The interaction between these signals reveals the actual gap: presence without authority, authority without coverage, or visibility without influence.

- Keyword-level reporting fragments reality; meaningful insights emerge only when visibility is measured across prompt-level segments that reflect real user exploration.

- Reporting only becomes actionable when it connects signals to competitive context and clearly indicates where authority, coverage, or positioning needs to change.

Search reporting hasn’t evolved as fast as search itself.

For years, teams have relied on rankings, impressions, and traffic to measure performance. Those metrics still matter, but they don’t explain how brands show up inside AI-generated answers.

This is where traditional reporting starts to break.

For mid-market and enterprise teams, the impact is immediate: AI answers influence which brands make it into consideration, not just which ones get clicks.

AI systems like ChatGPT, Perplexity, and Google AI Overviews don’t return ranked lists. They construct answers, choosing which brands to include, cite, and recommend.

Most AI visibility tools try to capture this by layering on metrics like mentions and sentiment.

The problem starts with how those signals are measured. Let me explain.

These tools rely on sampled data, static keyword sets, or API outputs that don’t reflect what users actually see. Reporting looks complete, but misses how visibility behaves in real prompts. If the reporting isn’t right, the actions based on it won’t be either.

Quattr takes a different approach.

It captures responses directly from consumer-facing AI outputs, tracks high-intent prompts derived from first-party data, and measures who gets cited, included, and trusted inside those answers. That's why Quattr is rated #1 for AI visibility reporting in the Mid-Market segment on G2.

Quattr Wins Enterprise Trust

Recognized by verified enterprise users on G2.com

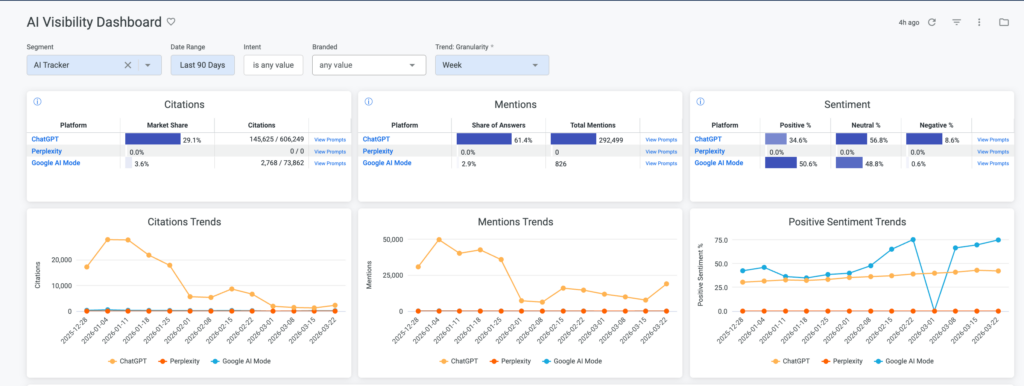

AI Visibility Analytics Has Three Distinct Signals

When visibility is measured through real AI responses, it doesn’t behave as a single metric.

It breaks into three distinct signals that need to be understood together.

Citations show whether your content is used as a source. This is where authority shows up. If AI systems consistently cite your content, you are shaping how answers are constructed.

Mentions show whether your brand is included in responses at all. This reflects presence. You can appear in answers without being a primary source.

Sentiment shows how your brand is positioned when it appears. This reflects perception. Being visible with weak or neutral framing does not carry the same impact as being strongly recommended.

These signals are measured across ChatGPT, Perplexity, and Google AI using actual responses, not approximations.
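To make the three definitions concrete, here is a minimal Python sketch that computes each signal for one brand from a batch of captured answers. Everything in it is an illustrative assumption, not Quattr's implementation or API: the `AIResponse` data model, its field names, and the exact formulas (mention share as the fraction of answers that include the brand, citation share as the brand's portion of all citations, sentiment as a score from -1 to 1 averaged over answers where the brand appears).

```python
from dataclasses import dataclass, field


@dataclass
class AIResponse:
    """One captured answer from an AI system (hypothetical data model)."""
    engine: str   # e.g. "chatgpt", "perplexity", "google_ai"
    prompt: str
    mentioned_brands: set[str] = field(default_factory=set)
    cited_brands: list[str] = field(default_factory=list)      # one entry per citation
    sentiment: dict[str, float] = field(default_factory=dict)  # brand -> score in [-1, 1]


def visibility_signals(responses: list[AIResponse], brand: str) -> dict[str, float]:
    """Compute mention share, citation share, and average sentiment for one brand."""
    if not responses:
        return {"mention_share": 0.0, "citation_share": 0.0, "avg_sentiment": 0.0}

    # Mentions (presence): fraction of answers that include the brand at all.
    mentioned = [r for r in responses if brand in r.mentioned_brands]
    mention_share = len(mentioned) / len(responses)

    # Citations (authority): the brand's portion of every citation across all answers.
    total_citations = sum(len(r.cited_brands) for r in responses)
    brand_citations = sum(r.cited_brands.count(brand) for r in responses)
    citation_share = brand_citations / total_citations if total_citations else 0.0

    # Sentiment (perception): averaged only over answers where the brand appears.
    scores = [r.sentiment[brand] for r in mentioned if brand in r.sentiment]
    avg_sentiment = sum(scores) / len(scores) if scores else 0.0

    return {
        "mention_share": mention_share,
        "citation_share": citation_share,
        "avg_sentiment": avg_sentiment,
    }


# Hypothetical sample: two captured answers involving two made-up brands.
responses = [
    AIResponse("chatgpt", "best tool for X", {"Acme", "Rival"},
               ["Rival", "Rival"], {"Acme": 0.2, "Rival": 0.8}),
    AIResponse("perplexity", "how to choose a tool for X", {"Acme"},
               ["Acme", "Rival"], {"Acme": 0.0}),
]
print(visibility_signals(responses, "Acme"))
```

In this made-up sample, Acme appears in every answer but holds only a quarter of the citations, while Rival appears in half the answers and holds three quarters of them.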

These Signals Don’t Move Together

Looking at these signals independently is not enough. What matters is how they interact, and what that tells you to do next.

A brand can have a high mention share but a low citation share. That usually indicates presence without authority. The brand appears in answers, but is not relied on as a source. In this case, the focus shifts to improving content depth and credibility so it gets cited, not just mentioned.

A brand can have a strong citation share in a narrow set of prompts but a low overall mention share. That suggests depth in specific areas but limited coverage. The opportunity here is expansion. The brand needs to show up across a broader set of related prompts.

Sentiment adds another layer. A brand may appear frequently but with neutral positioning. That limits its influence on decisions. This often points to weak differentiation or unclear content positioning.

These patterns don’t show up in traditional reporting. They only become visible when measurement is tied to real AI responses, and they directly inform what needs to change.
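The three patterns above can be sketched as simple rules over signals measured for groups of related prompts. This is a hypothetical illustration: the `diagnose` function, the group names, and the 0.5 / 0.2 thresholds are assumptions chosen for readability, not benchmarks and not Quattr's logic.

```python
def diagnose(groups: dict[str, dict[str, float]],
             high: float = 0.5,
             low: float = 0.2,
             neutral: float = 0.2) -> list[str]:
    """Label the signal patterns for one brand across groups of related prompts.

    `groups` maps a group name to its signals:
    {"mention_share": ..., "citation_share": ..., "avg_sentiment": ...}.
    Thresholds are illustrative assumptions only.
    """
    findings = []
    for name, s in groups.items():
        # Presence without authority: the brand appears but is rarely the source.
        if s["mention_share"] >= high and s["citation_share"] < low:
            findings.append(f"{name}: presence without authority")
        # Visibility without influence: frequent appearances with neutral framing.
        if s["mention_share"] >= high and abs(s["avg_sentiment"]) < neutral:
            findings.append(f"{name}: visibility without influence")

    # Authority without coverage: strongly cited in a few groups,
    # but barely mentioned in most of the others.
    strong = [n for n, s in groups.items() if s["citation_share"] >= high]
    absent = [n for n, s in groups.items() if s["mention_share"] < low]
    if strong and len(absent) > len(groups) / 2:
        findings.append("authority without coverage: strong in " + ", ".join(sorted(strong)))
    return findings


# Hypothetical prompt groups and signal values for one brand.
example = {
    "spend tracking": {"mention_share": 0.7, "citation_share": 0.1, "avg_sentiment": 0.5},
    "license audits": {"mention_share": 0.6, "citation_share": 0.6, "avg_sentiment": 0.05},
    "vendor renewals": {"mention_share": 0.1, "citation_share": 0.0, "avg_sentiment": 0.0},
    "procurement": {"mention_share": 0.05, "citation_share": 0.0, "avg_sentiment": 0.0},
    "shadow IT": {"mention_share": 0.0, "citation_share": 0.0, "avg_sentiment": 0.0},
}
for finding in diagnose(example):
    print(finding)
```

Each label maps to a different next step: deepen content where the brand lacks authority, expand into related prompts where coverage is missing, and sharpen differentiation where framing is neutral.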

From Signals to Segments with Quattr

Looking at signals in isolation doesn’t tell you which areas of demand need attention. That requires context.

Keyword-level reporting breaks down here. Queries vary widely, but intent clusters around themes. Measuring visibility at the keyword level fragments that view.

Quattr groups high-intent prompts into market segments and tracks performance at that level.

This makes the earlier signals actionable.

You can see segments where:

- Your brand is mentioned but not cited

- Competitors dominate citation share

- Visibility is growing without translating into clicks

Each of these patterns points to a specific gap.

Because these segments are built from real prompts across AI systems, they reflect how users actually explore a topic, not how keywords are structured.
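A minimal sketch of a segment-level rollup, assuming each captured response already carries a segment label (the step that assigns prompts to segments is not modeled here). The `segment_report` function, the tuple layout, and the 50% dominance threshold are hypothetical. It flags the first two gaps from the list above; the third requires click data, which this sketch leaves out.

```python
from collections import Counter, defaultdict

# (segment, brands mentioned in the answer, brands cited, one entry per citation)
Response = tuple[str, set[str], list[str]]


def segment_report(responses: list[Response], brand: str,
                   dominance: float = 0.5) -> dict[str, list[str]]:
    """Group responses by segment and flag citation gaps for `brand`."""
    by_segment: dict[str, list[tuple[set[str], list[str]]]] = defaultdict(list)
    for segment, mentioned, cited in responses:
        by_segment[segment].append((mentioned, cited))

    report = {}
    for segment, rows in by_segment.items():
        mentions = sum(1 for mentioned, _ in rows if brand in mentioned)
        citations = Counter(b for _, cited in rows for b in cited)
        total = sum(citations.values())
        flags = []
        # The brand shows up in answers but is never used as a source.
        if mentions and citations[brand] == 0:
            flags.append("mentioned but not cited")
        # A competitor holds at least `dominance` of all citations in the segment.
        if total:
            leader, count = citations.most_common(1)[0]
            if leader != brand and count / total >= dominance:
                flags.append(f"{leader} dominates citation share")
        report[segment] = flags
    return report


# Hypothetical captured responses for two made-up segments.
captured = [
    ("segment-a", {"Acme", "Rival"}, ["Rival", "Rival", "Other"]),
    ("segment-a", {"Acme"}, ["Rival"]),
    ("segment-b", {"Acme"}, ["Acme", "Acme", "Rival"]),
]
print(segment_report(captured, "Acme"))
```

Aggregating per segment rather than per keyword means a gap like "mentioned but not cited" is judged across every prompt in a theme, not one query at a time.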

Quattr’s Approach to AI Visibility Reporting

What makes AI visibility reporting useful is not the number of metrics. It’s whether those metrics reflect reality and lead to clear decisions.

Quattr’s approach is built around that idea.

It starts with how data is captured. Instead of relying on sampled datasets or API outputs, Quattr collects responses directly from consumer-facing AI systems. This ensures that what you measure matches what users actually see.

From there, visibility is structured around real prompts. High-intent queries are grouped into segments that reflect how users explore a topic across ChatGPT, Perplexity, and Google AI. This gives context to every signal, instead of isolating it at the keyword level.

Within those segments, Quattr measures:

- Who gets cited

- Who appears in the answers

- How each brand is positioned

This is where metrics like citation share and share of answers become meaningful. They are tied to actual prompts and competitive context, not abstract averages.

The result is a reporting system that connects three layers:

- visibility signals (citations, mentions, sentiment)

- market context (segments and competitors)

- decision points (where authority is weak, where coverage is missing, where positioning needs work)

That connection is what allows teams to move from observation to action without additional interpretation layers.

What does this look like in practice?

CloudEagle used Quattr to optimize 33 product and commercial pages over a 12-week period.

The impact was clear:

- 113% increase in organic clicks

- 3× increase in AI citation share

- 77% of traffic shifted to bottom-funnel queries

- 328 new Page 1 queries captured

These changes weren’t isolated. As citation share increased, authority improved. As mentions expanded, presence across relevant prompts increased. Traffic followed.

If Your Reporting Can’t Explain AI Visibility, It Can’t Improve It

Most teams can’t clearly answer:

- Where their brand is being cited

- Where it’s included but not trusted

- Which segments competitors dominate

That’s not a visibility problem. It’s a reporting problem.

Quattr gives you a direct view of how your brand shows up across AI systems, based on real prompts, real responses, and real competitive context.

Measure AI visibility the way it actually works. Then act on it. Book a demo with us today!