Key Takeaways

LLMO (Large Language Model Optimization) is the practice of optimizing your brand and content to appear in AI-generated responses, not just search rankings.

AI search visitors are 4.4x more valuable than traditional organic search visitors, making LLM visibility a direct revenue lever.

The real optimization mechanism behind most AI tools is Retrieval-Augmented Generation (RAG). LLMs pull from external sources in real time, which means SEO fundamentals still matter, just applied differently.

Off-page presence on Reddit, Wikipedia, industry publications, and database sites directly influences what LLMs retrieve and cite.

On-page, LLMO rewards passage-level clarity, semantic structure, and content that answers specific questions completely and concisely.

Tracking LLM visibility requires a different framework than keyword ranking; you measure it by prompts, personas, citation share, and brand sentiment across models.

LLMO and SEO are not competing priorities. The brands winning in AI search are the ones treating them as one integrated strategy.

Large Language Model Optimization (LLMO) is the practice of making your brand, content, and digital presence show up favorably in AI-generated responses: think Google AI Overviews, ChatGPT, Perplexity, and Claude. Not in the links below the answer. In the answer itself.

That distinction matters more than most teams realize. When an AI cites a source, recommends a brand, or summarizes a solution, users often stop there. They don’t click through five blue links. They act on what the model tells them.

LLMO is how you make sure what the model tells them includes you.

LLMO is the next layer on top of SEO, one that rewards clarity, authority, and structure over keyword density and domain tricks. And if you’re not thinking about it yet, your competitors probably are.

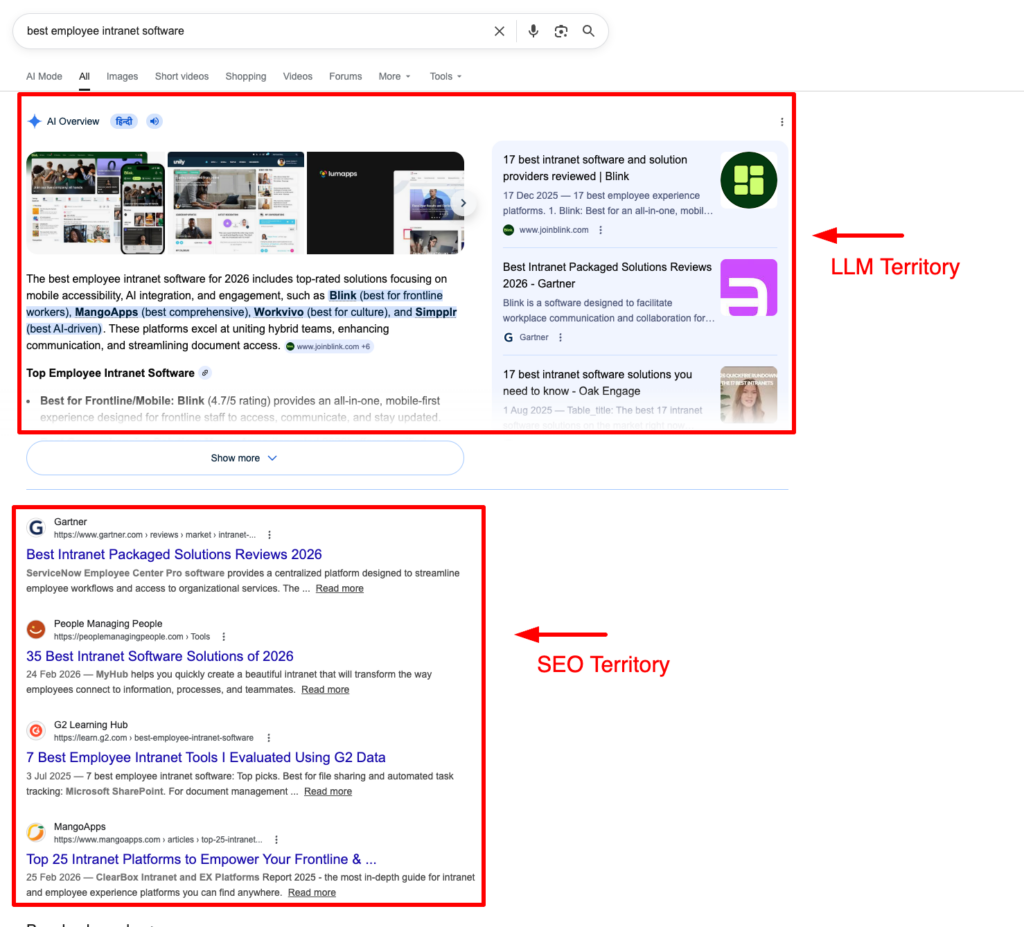

How LLMO Differs from SEO

SEO and LLMO share the same foundation: authoritative content, technical accessibility, and credible backlinks. But they diverge on what they’re optimizing for.

SEO gets you ranked. LLMO gets you cited. Those are different outcomes, measured differently, achieved differently.

| | Traditional SEO | LLMO |
|---|---|---|
| Goal | Rank on search engine results pages | Appear in AI-generated responses |
| Core signal | Backlinks, domain authority | Brand mentions, semantic authority |
| Content unit | Page | Passage or fragment |
| Discovery method | Keyword matching | Intent and context matching |
| Success metric | Rankings, clicks, CTR | Citation share, brand sentiment, AI visibility |
| Optimization target | Search engine crawlers | LLM retrieval systems (RAG) |
| Content style | Keyword-optimized, comprehensive | Clear, structured, directly answerable |

The mindset shift is this: in SEO, you’re competing for position. In LLMO, you’re competing to be the best answer. That changes how you write, what you publish, and where you build presence outside your own site.

LLMO Vs GEO Vs AEO Vs LLM SEO

The terminology around AI search optimization is still settling. You’ll see these terms used interchangeably, but they mean different things — and the distinctions matter when you’re deciding where to focus.

LLMO (Large Language Model Optimization) is the broadest of the four. It covers optimizing your brand and content for any LLM — consumer chatbots like ChatGPT and Claude, enterprise AI tools, and generative search experiences. Everything in this post falls under LLMO.

GEO (Generative Engine Optimization) sits within LLMO but narrows the focus to generative search engines specifically — Perplexity, Google AI Overviews, Gemini. The goal is citation: showing up as a named source inside a synthesized response, not just in the links below it.

AEO (Answer Engine Optimization) predates both. It was built around voice search and featured snippets — optimizing for direct, concise answers to specific queries. The structural principles still apply, but AEO alone doesn’t account for the full complexity of LLM retrieval.

LLM SEO is how the SEO community tends to frame the same work, treating LLM visibility as an extension of traditional SERP optimization rather than a separate discipline. It’s the same tactics, different framing.

In practice, the overlap is significant. The content and structural work that earns you citations in AI Overviews is the same work that gets you surfaced in ChatGPT. LLMO is simply the most complete frame for the full opportunity.

Why LLMO Matters Right Now

The numbers make the case quickly. According to Sundar Pichai, CEO of Alphabet and Google, on the company’s Q2 2025 call: AI Overviews, a Google Search feature available in 200 countries and territories, now has 2 billion monthly users, up from 1.5 billion in May 2025. Conversational AI tools like ChatGPT, Perplexity, and Claude collectively pulled over 600 million unique visitors in May 2025. That’s not a niche behavior anymore. That’s mainstream search.

What makes this urgent isn’t just volume, it’s value. AI search visitors convert at 4.4x the rate of traditional organic visitors. The intent is sharper, the context is richer, and the user is closer to a decision by the time they act. Visibility in AI responses isn’t a vanity metric. It’s a revenue channel.

A good place to start is a quick brand audit. Open ChatGPT, Perplexity, and Google AI Overviews. Search for the top three problems your product solves, not your brand name. See who gets cited. If it’s not you, that’s your gap list.

The window to move early is still open, but not for long. The brands showing up consistently in AI responses today are building a compounding advantage. LLM training data doesn’t reset every quarter. What gets cited now influences how models understand and represent your brand for future versions, too.

That’s why prioritizing your highest-intent pages first makes more sense than trying to optimize everything at once.

Find the five to ten pages closest to a purchase decision and make sure each one answers a specific question completely and clearly, without burying the answer in preamble. Those are the pages LLMs are most likely to retrieve when a user is ready to act.

How LLMs Actually Decide What to Surface

Most LLMO advice skips this part. You need to understand the mechanism; otherwise, you’re just optimizing blindly.

LLMs generate responses from patterns learned during training: statistical relationships between words, concepts, and entities. But training data goes stale. For anything current, such as pricing, product comparisons, or brand recommendations, a static model isn’t reliable. That’s where Retrieval-Augmented Generation (RAG) comes in.

RAG lets LLMs pull from live external sources before responding. When you ask ChatGPT a question with browsing enabled, it queries Bing, retrieves the most relevant content, and uses that as its grounding layer. Google’s AI Overviews work on the same principle, pulling from Google’s own index.
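To make the retrieve-then-ground flow concrete, here is a minimal sketch in Python. It is a toy, not how ChatGPT or Google actually rank content: the word-overlap scoring, the sample passages, and the function names (`retrieve`, `build_grounded_prompt`) are all assumptions chosen for illustration. Real systems use search indexes and semantic ranking, but the shape is the same: score passages against the question, keep the best few, and hand them to the model as context.

```python
import re
from collections import Counter

# Hypothetical passages standing in for indexed web content.
PASSAGES = [
    "Acme Intranet deploys in under a day and needs no IT support.",
    "Our company was founded in 2009 and values teamwork.",
    "The easiest intranet platform to deploy is one with prebuilt templates.",
]


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def overlap_score(query: str, passage: str) -> int:
    """Count shared tokens: a crude stand-in for real relevance ranking."""
    shared = Counter(tokenize(query)) & Counter(tokenize(passage))
    return sum(shared.values())


def retrieve(query: str, passages: list[str], k: int = 2) -> list[str]:
    """Return the k passages with the highest overlap score."""
    ranked = sorted(passages, key=lambda p: overlap_score(query, p), reverse=True)
    return ranked[:k]


def build_grounded_prompt(query: str, passages: list[str], k: int = 2) -> str:
    """Assemble the context a model would be asked to answer from."""
    sources = retrieve(query, passages, k)
    context = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(sources))
    return f"Answer using only these sources:\n{context}\n\nQuestion: {query}"
```

Even in this toy version, the passage that answers the question directly outranks the one that only talks about the company, which is the behavior the rest of this post asks you to write for.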

Two data points are worth internalizing. First, about 90% of the pages ChatGPT cites rank at position 21 or beyond, meaning strong content can surface without top rankings, but only if it’s indexed and crawlable. Second, 44.2% of all LLM citations come from the first 30% of a piece of content. If your answer is in paragraph twelve, the model is more likely to cite whoever put it in paragraph two.
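One rough way to audit the second point is to check how far into a page the key answer first appears. The sketch below is a heuristic under stated assumptions: it measures position by character offset in the extracted page text, and the 0.30 cutoff simply mirrors the "first 30%" figure above. The function names are hypothetical.

```python
from typing import Optional


def answer_position(content: str, answer_phrase: str) -> Optional[float]:
    """Return where the phrase first appears, as a fraction of content length.

    0.0 means the very start of the page; None means the phrase is absent.
    """
    if not content:
        return None
    idx = content.lower().find(answer_phrase.lower())
    if idx == -1:
        return None
    return idx / len(content)


def in_first_portion(content: str, answer_phrase: str, cutoff: float = 0.30) -> bool:
    """True if the phrase first appears within the leading `cutoff` share of the page."""
    pos = answer_position(content, answer_phrase)
    return pos is not None and pos <= cutoff
```

Running `in_first_portion(page_text, "your core answer phrase")` across your highest-intent pages gives a quick list of the ones that bury the answer.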

The Core LLMO Tactics That Move the Needle

Get Your Brand Mentioned Where LLMs Are Already Looking

LLMs retrieve from sources they trust, and that trust is built through consistent presence on high-authority platforms.

YouTube, Reddit, Quora, and Wikipedia are the most cited sources in Google AI Overviews. If your brand isn’t mentioned naturally in those spaces, you’re invisible to a significant portion of what LLMs retrieve. Engage in relevant subreddits, contribute to Quora threads, and earn coverage in large industry publications, not for backlinks but for mentions.

Database sites matter too. G2, Capterra, and niche-specific directories are frequently pulled by RAG systems when users ask for vendor recommendations. A complete, well-maintained profile is low-effort, high-leverage. And a mention in a Forbes article or analyst report carries far more weight than ten blog posts on your own domain.

Structure Content So LLMs Can Extract It

On your own site, the goal is extractability. LLMs retrieve fragments, not pages, so every section needs to stand on its own.

Start with the answer. State the solution in the first two paragraphs. Use clear subheadings, plain language, and avoid passages that only make sense in the context of what came before. ChatGPT is more likely to cite content that uses definite language, high entity density, and simple writing structures.

Schema markup closes the loop. FAQPage, HowTo, and Article schema help AI systems understand your content’s intent without inference. It’s the difference between being interpreted correctly and being skipped for something clearer.
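As an illustration, FAQPage markup is a small JSON-LD object built from schema.org's `FAQPage`, `Question`, and `Answer` types. The Python sketch below generates it from question and answer pairs; the helper name `faq_jsonld` and the sample pair are assumptions for the example, not part of any particular CMS or plugin.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


if __name__ == "__main__":
    # Sample pair for illustration only.
    print(faq_jsonld([("What is LLMO?", "LLMO is Large Language Model Optimization.")]))
```

The resulting string goes inside a `<script type="application/ld+json">` tag on the page it describes.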

Build Topical Authority Through Smart Internal Linking

LLMs recognize expertise through consistency and coverage across interlinked content, not a single optimized page. This is where semantic internal linking becomes a genuine LLMO lever.

Simpplr used Quattr to build topical content hubs and optimize over 200 pages with structured internal linking, doubling non-brand organic traffic year-over-year and becoming the number one site for organic traffic in the employee intranet software category. Paid reliance dropped from 55% to under 30%.

Kiteworks went further at enterprise scale, replacing over 53,000 static links with a dynamic AI-powered link graph via Quattr’s Autonomous Linking API, anchored to real GSC search queries rather than keyword guesses. Within eight weeks: 30% more indexed pages, a 22% jump in top-3 rankings, and a 79% expansion in AI Overview presence. During an industry-wide SERP downturn, linked pages preserved up to 19.9% more sessions than unlinked ones.

Manage What LLMs Learn About Your Brand

Reviews, forum discussions, and third-party coverage all feed into how a model characterizes your brand. Negative sentiment surfaces in AI responses. So does positive sentiment.

Brands that respond to reviews, earn consistent positive coverage, and address criticism systematically are building the training signal that works in their favor long-term. Reputation management today isn’t defensive; it’s an offensive growth strategy.

Your LLMO Quick-Start Checklist

Before you go deep on strategy, run through these seven actions. Each one moves the needle on its own; together, they build the foundation.

- Search your top 10 buying-stage prompts in ChatGPT, Perplexity, and Google AI Overviews. Document who gets cited, how your brand is described when it appears, and where you’re absent entirely. That gap list is your LLMO roadmap.

- Audit your five highest-intent pages. If the core answer isn’t in the first two paragraphs, restructure. LLMs won’t hunt for it; they’ll cite whoever made it easy to find.

- Make every H2 block coherent without context from what came before it. Fragments get cited, not full narratives. If a passage depends on prior reading to make sense, rewrite it.

- Add FAQPage, HowTo, and Article schema. It reduces the inference work an LLM has to do, and less guesswork on the model’s end means higher citation probability on yours.

- Check G2, Capterra, Crunchbase, LinkedIn, and any niche directories relevant to your category. Your brand name, description, and positioning should be identical across all of them. Inconsistency creates semantic ambiguity, and ambiguity costs you citations.

- Identify your three to four core topic areas and check whether your content is semantically linked across each one. Orphaned pages don’t build authority. Connected, interlinked content does, and LLMs follow the same logic.

- Track citation share, AI inclusion rate, and brand sentiment across models. These are the metrics that reflect actual LLM visibility. If you’re only tracking rankings and clicks, you’re measuring a shrinking slice of how your audience finds you.
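The orphaned-page check from the list above is easy to sketch if you can export your internal links as a map of page to outbound links. The version below is a simplified illustration: the `links` structure, the sample URLs, and the `find_orphans` name are assumptions, and a real crawl export will need normalizing (trailing slashes, redirects, canonical URLs) first.

```python
def find_orphans(links: dict[str, set[str]], entry_points: set[str]) -> set[str]:
    """Return pages that no other page links to.

    `links` maps each page to the set of internal pages it links out to.
    `entry_points` are pages reached directly (for example the homepage),
    so they are not counted as orphans. Self-links are ignored.
    """
    all_pages = set(links) | {target for targets in links.values() for target in targets}
    linked_to = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source
    }
    return all_pages - linked_to - entry_points


# Hypothetical site structure for illustration.
SITE_LINKS = {
    "/": {"/hub"},
    "/hub": {"/guide-a", "/guide-b"},
    "/guide-a": {"/hub"},
    "/guide-b": set(),
    "/old-post": {"/hub"},
}
```

Here `find_orphans(SITE_LINKS, {"/"})` flags `/old-post`: it links into the hub, but nothing links back to it.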

For the full execution framework, including technical checks, structural pitfalls, and entity trust signals, work through the LLM SEO Checklist.

How to Track LLM Visibility

Ranking reports won’t tell you how you’re doing in AI search. The measurement framework has to change along with the channel.

Start with prompt-based tracking. AI users ask full questions: “what’s the best tool for managing SaaS spend” or “which employee intranet platform is easiest to deploy.” Your visibility measurement needs to mirror that behavior. Identify the prompts that represent real buying conversations across your key topic areas and run them consistently across models, not just Google, but ChatGPT, Perplexity, and Gemini too.
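If you log each prompt run by hand or by script, citation share reduces to simple counting. The sketch below assumes one common definition (the fraction of tracked responses per model that cite your brand); tools may define the metric differently. The `Observation` record, the brand names, and the sample data are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """One logged AI response for a tracked prompt."""
    prompt: str
    model: str
    cited_brands: frozenset  # brands named or cited in the response


def citation_share(observations: list[Observation], brand: str) -> dict[str, float]:
    """Per model: fraction of tracked responses that cite the brand."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for obs in observations:
        totals[obs.model] += 1
        if brand in obs.cited_brands:
            hits[obs.model] += 1
    return {model: hits[model] / totals[model] for model in totals}


# Hypothetical log: two prompts run on two models.
LOG = [
    Observation("best intranet platform", "chatgpt", frozenset({"BrandA", "BrandB"})),
    Observation("easiest intranet to deploy", "chatgpt", frozenset({"BrandB"})),
    Observation("best intranet platform", "perplexity", frozenset({"BrandA"})),
    Observation("easiest intranet to deploy", "perplexity", frozenset({"BrandA"})),
]
```

With this log, `citation_share(LOG, "BrandA")` reports 0.5 for `chatgpt` and 1.0 for `perplexity`, which points at the model where the gap is.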

Brand sentiment and positioning in AI responses matter as much as presence. If a model is consistently describing your product as complex or expensive, even without you knowing it, that characterization is shaping purchase decisions. Catching it early lets you correct it.

This is where Quattr’s execution-led platform becomes the operational layer. Quattr captures results directly from real consumer-facing AI responses, not API simulations, so you see exactly how your brand appears inside AI answers the same way users do. It tracks citations, brand mentions, share of voice, sentiment, and competitor presence across Google AI Overviews, ChatGPT, Claude, Perplexity, and AI Mode. Prompts are based on first-party data, showing who gets cited, which topics competitors dominate, and where your biggest citation opportunities exist. And because it ties AI visibility directly to GA4 and Search Console, you can see how GEO performance translates into actual traffic and conversions, not just impressions.

LLMO Strategy Is Only as Good as the Platform Behind It

Most teams tracking AI visibility are stitching together a search rank tracker, a brand monitoring tool, a content optimization platform, an internal linking solution, and a spreadsheet to hold it all together. The result is fragmented data, delayed action, and no clear line between what you’re seeing and what you should do next.

Quattr is built as a single platform that connects AI visibility tracking to content execution, so the gap between insight and impact closes in days, not quarters.

- Generative Engine Optimization (GEO) — Track your AI Citation Share, brand sentiment, and competitor visibility across ChatGPT, Gemini, Perplexity, and Google AI Overviews in one dashboard

- Content AI — Identify exactly which pages are underperforming in AI search and get actionable recommendations to improve extractability and topical authority. Quattr’s AI SEO agent GIGA then executes at 3x content velocity, creating and optimizing content without the high upfront cost of scaling a team

- Internal Linking AI — Deploy a demand-weighted, self-updating link graph that signals topical depth to both search engines and LLMs at scale

- AI SEO Suite — End-to-end platform connecting keyword research, on-page optimization, and AI search visibility into one integrated workflow

- Growth Concierge — A team of SEO and AEO experts who analyze your data, prioritize opportunities, and help you execute, not just report

Frequently Asked Questions on LLMO

What is LLMO?

LLMO stands for Large Language Model Optimization. It’s the practice of making your brand and content appear favorably in AI-generated responses, like those in ChatGPT, Google AI Overviews, and Perplexity, rather than just in traditional search rankings.

Is LLMO the same as SEO?

No, but they’re closely related. SEO gets your content discovered and indexed. LLMO gets it extracted, cited, and surfaced inside AI-generated answers. The brands winning in AI search are treating them as one integrated strategy, not two separate workstreams.

How does LLMO work?

Most AI search tools use Retrieval-Augmented Generation (RAG); they pull from live external sources before generating a response. That means if your content is well-structured, publicly accessible, and mentioned on authoritative platforms, it’s more likely to be retrieved and cited. LLMO is about optimizing for that retrieval layer.