Key Takeaways

- GEO is about getting your content used in AI answers, not just ranking in search

- AI engines prioritize entities, structure, and extractability, not just keywords

- Content must be written to stand alone so it can be pulled into responses

- Topical depth and site structure matter more than individual pages

- Traditional analytics miss this; you need GEO-specific tracking like citation share

- Waiting is a strategy, and it usually means falling behind

Generative Engine Optimization (GEO) is about getting your content used in AI answers: not just listed, but actually pulled into the response.

The difference between being linked to and actually being pulled from sounds minor until you realize it completely rewires how optimization and discovery work today.

Google AI Overviews, ChatGPT, Perplexity, Gemini, Copilot: none of them return ten blue links and call it done. They synthesize. They write an answer, attribute it to sources, and move on. A Salesforce study found that 53% of consumers now use AI tools for product research before making a purchase decision. They’re getting answers. If your content isn’t what those answers draw from, you’re not in the consideration set, regardless of where you rank.

Is this stable enough to invest in seriously? Fair question.

Citation sources are volatile, with a month-to-month shift of 40-60% across major AI platforms. But underneath that volatility, patterns hold. The brands showing up consistently share specific characteristics: entity clarity, extractable content structure, and deep topical coverage. These aren’t new SEO tricks with a different name. They’re a different set of signals, optimized for a different retrieval layer.

Generative Engine Optimization is about deliberately building the signals that make AI engines reach for your content when they’re writing an answer in your space.

Everything else in this guide (the tactics, the measurement, the architecture) follows from that.

How Generative Engines Work

Most generative engines today don’t rely purely on training data. They use Retrieval-Augmented Generation (RAG), pulling live content at query time to supplement what the model already knows. Perplexity is almost entirely RAG-based. Google AI Overviews blend training data with live retrieval. ChatGPT’s browsing mode does the same.

At query time, the engine breaks the question into semantic chunks, retrieves relevant content from indexed sources, and hands that content to the language model to synthesize into a response. What gets retrieved, and what gets cited, depends on how well your content matches the query semantically, how clearly it’s structured for extraction, and how trustworthy the source looks to the retrieval layer.
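The retrieval step described above can be sketched in a few lines. This is a toy illustration, not how any particular engine works: it swaps real embeddings for simple word-count vectors, and the passages, URLs, and function names (`tokenize`, `cosine`, `retrieve`) are all hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, passages, k=2):
    """Return up to k passages that share terms with the query, best first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(p["text"]))), p) for p in passages]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for score, p in scored[:k] if score > 0]


# Hypothetical indexed passages (word counts stand in for embeddings).
passages = [
    {"source": "example.com/geo",
     "text": "Generative engine optimization is the practice of structuring content so AI engines cite it."},
    {"source": "example.com/pricing",
     "text": "Our pricing starts at ten dollars per seat per month."},
    {"source": "example.org/seo",
     "text": "Search engine optimization improves ranking in search result pages."},
]

top = retrieve("what is generative engine optimization", passages, k=2)
for p in top:
    print(p["source"])
```

The passage that states the concept directly scores highest, the loosely related one comes second, and the pricing page is never handed to the model at all.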

In practice, that means a specific set of signals the engine is looking for.

- How consistently does your content cover a topic?

- How clearly does it connect your brand to the right entities: product category, use cases, buyer context?

- How often do credible sources reference you?

- Is your content structured so that individual claims are easy to extract and attribute?

Generative engines don’t read your page like a person would. They decide whether your claims are solid enough to put in front of someone.

That’s the question worth keeping in mind every time you make a GEO call.

GEO vs. SEO vs. AEO: What’s Actually Different

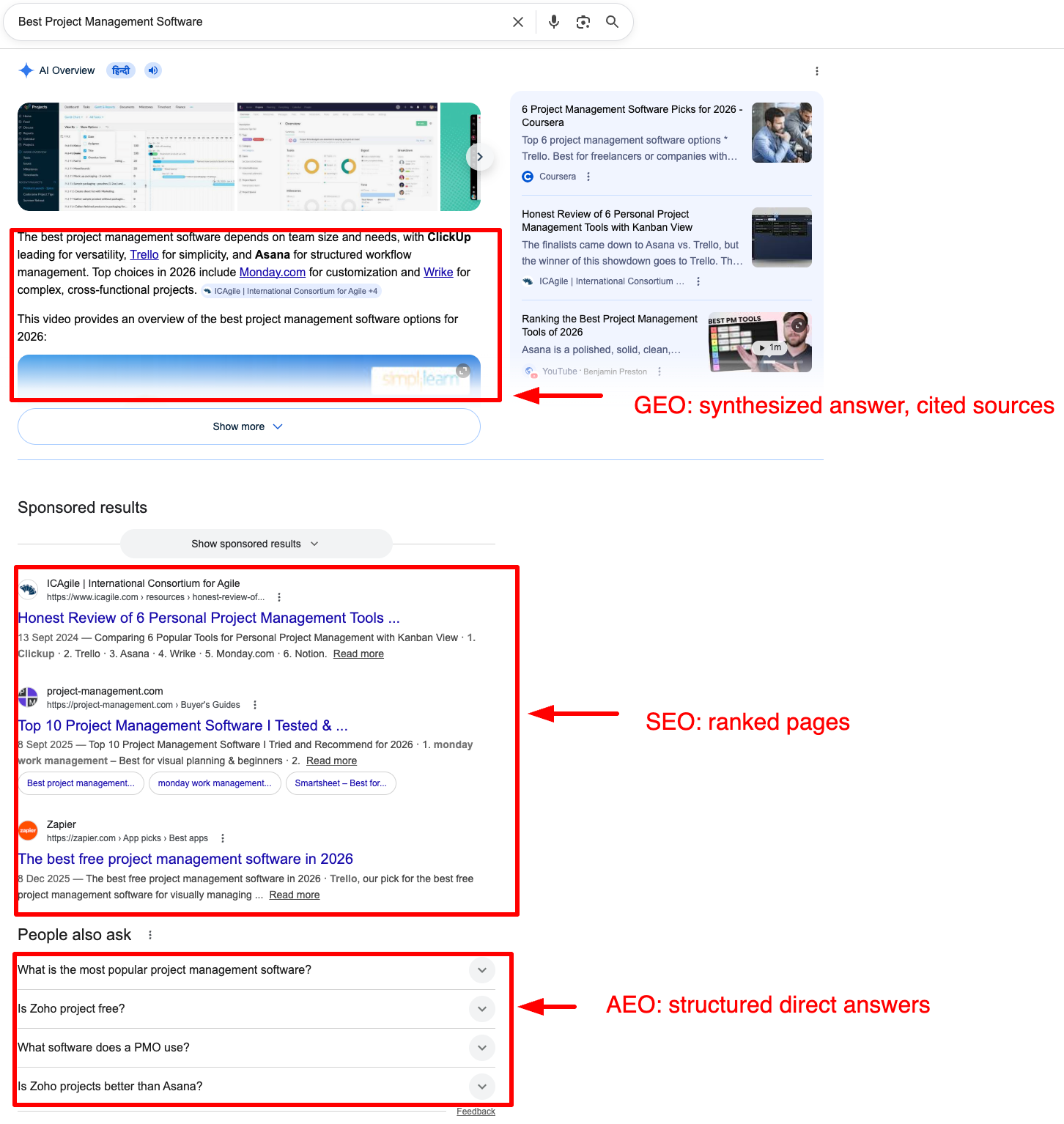

These three disciplines share infrastructure but serve different surfaces.

| Dimension | SEO | AEO | GEO |
|---|---|---|---|
| Target surface | Search result pages | Featured snippets, PAA boxes | AI-generated answers |
| Primary signal | Backlinks, relevance, UX | Structured data, concise answers | Entity authority, topical depth, citations |
| Output | Page ranking | Direct answer placement | Source citation in AI response |
| Measurement | Rankings, organic traffic | Snippet ownership, impression share | AI Citation Share, generative Share of Voice |
| Content format | Long-form, keyword-targeted | FAQ, schema-marked, concise | Authoritative, entity-rich, semantically structured |

The overlap is real; a well-optimized page with strong topical authority and clear structure will pull weight across all three. But they fail differently, and that’s where most teams get caught.

A page can rank in the top three on Google, never capture a featured snippet, and still get cited consistently in AI-generated answers because it has entity depth and a trusted citation footprint. The reverse is equally true: snippet ownership doesn’t translate into GEO visibility if the underlying content lacks topical authority.

Don’t treat these as competing channels where you have to pick one. It’s layered optimization: SEO builds the foundation, AEO captures the structured answer layer, and GEO earns authority in synthesized responses.

Get the architecture right, and these layers compound each other. Get it wrong, and each one will show you exactly where the others are letting you down.

What Generative Engines Use to Decide Who Gets Cited

The signal that holds up best isn’t domain authority or page authority; it’s how thoroughly you actually cover a topic. A site that covers SaaS procurement exhaustively will get cited over a stronger domain that mentions it in passing. Coverage gaps in your topic cluster are citation gaps in AI answers. They’re the same thing.

Entity clarity is where most SEO practitioners underestimate GEO. Generative engines work with entities (brands, products, use cases, buyer contexts) and the relationships between them. If your content doesn’t consistently connect your brand to the right entities, you won’t surface in answers about those topics. The engine isn’t ignoring you on purpose; it just doesn’t have enough consistent signals to know what category you belong in.

Think about it this way. When an AI encounters “Monday” across thousands of web pages, consistent signals across your site, your LinkedIn, your G2 profile, and your documentation are what tell it you’re project management software, not the day of the week. The same disambiguation problem applies to every brand in every category.
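One common way to state those signals in machine-readable form is schema.org `Organization` markup with `sameAs` links to your external profiles. The sketch below only generates the JSON-LD. The brand name, URLs, and the `organization_jsonld` helper are hypothetical, and nothing here establishes how much weight any given engine places on this markup.

```python
import json


def organization_jsonld(name, url, description, same_as):
    """Build a schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs lists other pages that identify the same entity.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


# Hypothetical brand and profile URLs, for illustration only.
markup = organization_jsonld(
    name="ExampleCo",
    url="https://www.exampleco.example",
    description="ExampleCo is project management software for product teams.",
    same_as=[
        "https://www.linkedin.com/company/exampleco",
        "https://www.g2.com/products/exampleco",
    ],
)
print(markup)
```

The output would typically be embedded in a `<script type="application/ld+json">` tag, with the same category description repeated consistently wherever the brand appears.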

Content extractability determines which specific parts of your content get pulled into answers. AI systems don’t read pages. They chunk them, convert those chunks into vectors, and retrieve the most relevant passages at query time. A paragraph that relies on the three paragraphs before it to make sense won’t survive extraction intact. A paragraph that states a clear claim, supports it, and stands alone will.
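A rough way to spot paragraphs that won’t survive extraction is to flag those that open with a word pointing back at earlier text. The check below is a crude heuristic under that assumption, not a measure any engine is known to use. The opener list and the function names are illustrative.

```python
import re

# Words that usually signal a dependency on the preceding paragraph (assumed list).
DEPENDENT_OPENERS = {
    "this", "that", "these", "those", "it", "they",
    "such", "however", "also", "but", "and", "so",
}


def split_paragraphs(text):
    """Split text on blank lines and drop empty chunks."""
    return [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]


def depends_on_context(paragraph):
    """True if the paragraph opens with a back-referencing word."""
    words = re.findall(r"[A-Za-z']+", paragraph)
    return bool(words) and words[0].lower() in DEPENDENT_OPENERS


def audit(text):
    """Pair each paragraph with its context-dependency flag."""
    return [(p, depends_on_context(p)) for p in split_paragraphs(text)]


page = """AI Citation Share is the percentage of AI answers for a query set that cite your brand.

It matters because of the reasons above.

Topical hubs group related pages under one pillar page."""

flags = [flag for _, flag in audit(page)]
print(flags)
```

Only the middle paragraph is flagged: pulled out on its own, "It" and "the reasons above" point at nothing.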

Inbound citations from credible sources still matter, not because link authority maps cleanly from SEO to GEO, but because citation patterns are how generative engines assess source trustworthiness. A brand that’s referenced consistently across G2, industry publications, Reddit threads, and third-party documentation looks different to a retrieval system than one that only publishes on its own site.

Freshness closes the list. RAG-heavy engines pull live content. A page that hasn’t been touched in six months will lose citation share to a fresher source on fast-moving topics, even if it was once the definitive reference. That’s why maintaining these pages is a high priority.
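A freshness audit can be as simple as listing pages whose last update is older than a cutoff. The sketch below uses 180 days to mirror the six-month figure above. That threshold, the page records, and the `stale_pages` helper are assumptions for illustration.

```python
from datetime import date, timedelta


def stale_pages(pages, today, max_age_days=180):
    """Return pages last updated before the cutoff, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [p for p in pages if p["last_updated"] < cutoff]
    return sorted(stale, key=lambda p: p["last_updated"])


# Hypothetical page inventory.
pages = [
    {"url": "/guides/geo", "last_updated": date(2025, 1, 10)},
    {"url": "/guides/aeo", "last_updated": date(2024, 3, 2)},
    {"url": "/blog/launch", "last_updated": date(2024, 8, 15)},
]

overdue = stale_pages(pages, today=date(2025, 3, 1))
for p in overdue:
    print(p["url"], p["last_updated"].isoformat())
```

In practice you would tighten `max_age_days` for fast-moving topics and loosen it for evergreen ones.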

What you can’t control: what’s already encoded in a model’s training data.

What you can control: entity footprint, content structure, topical depth, citation signals, and freshness. That’s more than enough to make a real, measurable difference.

The Generative Engine Optimization Playbook

A page answers a query. A source owns a topic. The entire GEO playbook is built around becoming the latter.

Start with prompt mapping

Identify the exact questions users submit to AI engines and trace whether any of your pages could plausibly be cited in response. Run your target prompts through AI platforms and note which responses cite competitors but not you. Those gaps are your content roadmap. Generative engines retrieve by concept, not exact match; your pages need to reflect the full semantic neighborhood of each topic, not just the primary keyword.
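Once you have recorded which domains each answer cites, finding the gaps is a small filtering step. The sketch below assumes you collected that data by hand or with your own tooling. The prompts, domains, and the `citation_gaps` helper are hypothetical.

```python
def citation_gaps(observations, brand):
    """Return prompts where other domains were cited but `brand` was not."""
    gaps = {}
    for prompt, cited in observations.items():
        cited_set = set(cited)
        # A gap needs at least one competitor citation and no citation of the brand.
        if cited_set and brand not in cited_set:
            gaps[prompt] = sorted(cited_set)
    return gaps


# Hypothetical observations: prompt -> domains cited in the AI answer.
observations = {
    "best saas procurement tools": ["competitor-a.example", "competitor-b.example"],
    "what is saas procurement": ["yourbrand.example", "competitor-a.example"],
    "saas renewal checklist": [],
}

gaps = citation_gaps(observations, "yourbrand.example")
for prompt, competitors in gaps.items():
    print(prompt, "->", ", ".join(competitors))
```

Prompts with no citations at all are left out here; whether they count as opportunities is a separate judgment call.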

Fix architecture before fixing pages

A single well-optimized page won’t earn a consistent citation share. What earns it is a content ecosystem that signals coherent, deep ownership of a subject. When CloudEagle restructured semantic linking across 33 commercial pages, no new content, just architecture, their AI Citation Share tripled in 12 weeks. The content was there. The structure wasn’t doing it justice.
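The source doesn’t detail what that restructuring involved, so the following is only a generic hub-and-spoke check, not CloudEagle’s method. It assumes you have a crawl export mapping each page to the URLs it links to. The URLs and the `cluster_link_gaps` helper are hypothetical.

```python
def cluster_link_gaps(hub, members, links):
    """List missing links between a hub page and its cluster members.

    `links` maps a page URL to the set of URLs that page links to.
    Each result is a (from_page, to_page) pair that should exist but doesn't.
    """
    missing = []
    for page in members:
        if page not in links.get(hub, set()):
            missing.append((hub, page))
        if hub not in links.get(page, set()):
            missing.append((page, hub))
    return missing


# Hypothetical crawl data for one topic cluster.
links = {
    "/procurement": {"/procurement/pricing"},
    "/procurement/pricing": {"/procurement"},
    "/procurement/renewals": set(),
}

missing = cluster_link_gaps(
    hub="/procurement",
    members=["/procurement/pricing", "/procurement/renewals"],
    links=links,
)
for source, target in missing:
    print(f"add link: {source} -> {target}")
```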

Write for extraction

Every key passage must be self-sufficient. Open with the claim, support it, close the point, all within the same paragraph. Four patterns that consistently improve extractability:

- Definitions written as direct declarative sentences — “X is Y that does Z”

- Comparisons structured as tables rather than vague qualifiers

- Subheadings that name the concept explicitly rather than tease it

- Evidence cited inline — a statistic bound directly to the claim it supports

Match structure to query intent

Informational queries pull from definitional content. Comparative queries favor tables. Commercial queries surface content that names specific use cases and buyer contexts. Match your H2 and H3 structure to the shape of the query; if your page mixes intent types without clear structural separation, citation share drops.

Build topical hubs, not standalone pages

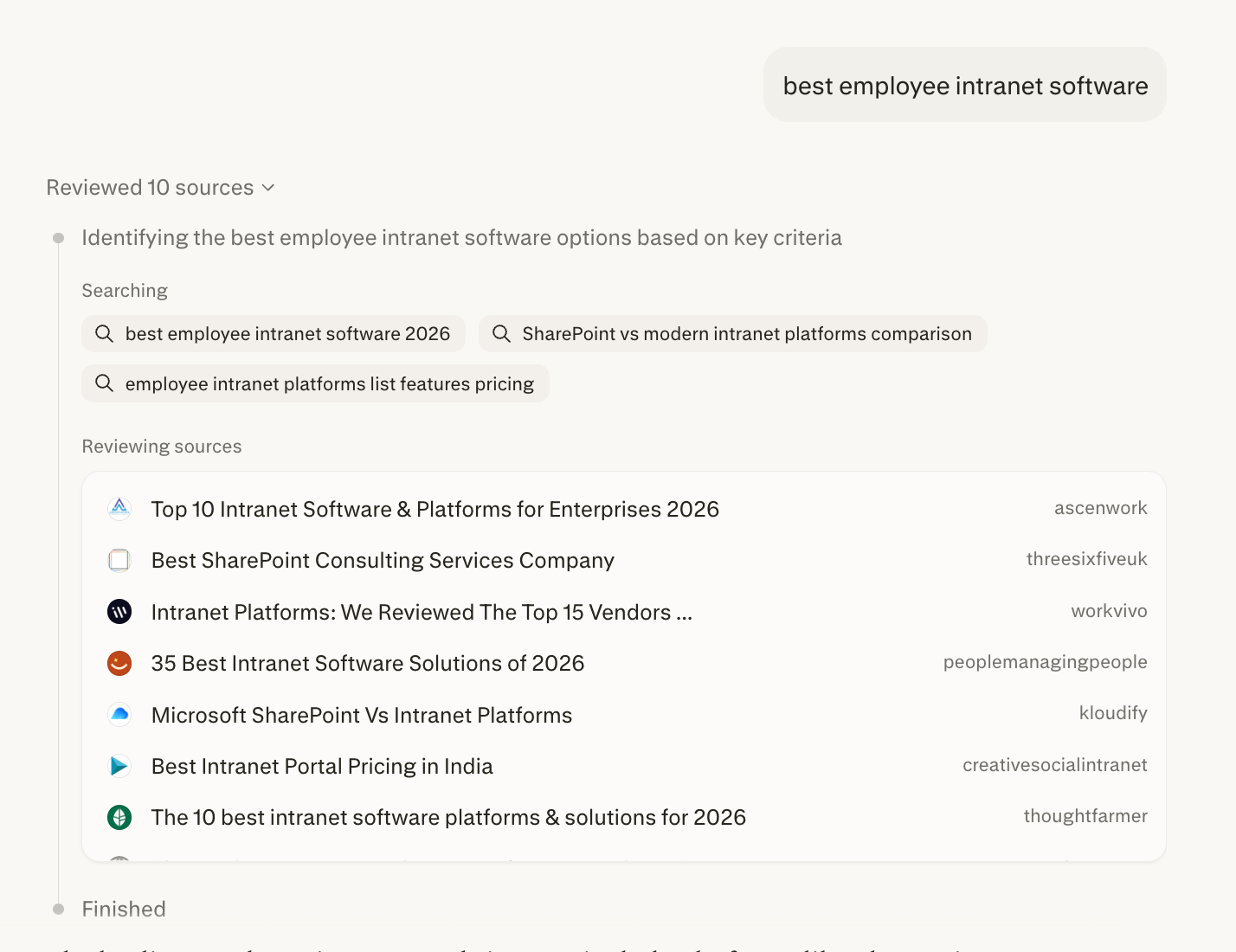

When Simpplr built content hubs across 200+ pages with Quattr, they doubled non-brand organic traffic year-over-year, became #1 in organic traffic in the employee intranet software space, and cut paid search reliance from 55% to under 30%. Topical authority compounds. Standalone pages don’t.

Build GEO into your regular working rhythm

GEO is not a one-time implementation. Build a quarterly review cycle around these five actions:

- Monitor citation share across target queries and flag drops before they compound

- Refresh aging statistics, outdated entity references, and superseded claims

- Expand thin topic clusters where coverage gaps are costing you retrieval relevance

- Update entity associations as your positioning evolves

- Audit uncited passages and rewrite them for extractability
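The first action in that list, flagging drops, can be sketched as a comparison between two review periods. The 10-point threshold, the sample figures, and the `flag_drops` helper are illustrative assumptions rather than recommended values.

```python
def flag_drops(previous, current, threshold=0.10):
    """Return queries whose citation share fell by more than `threshold`.

    Shares are fractions between 0 and 1; a query missing from `current`
    is treated as a share of 0.
    """
    flagged = {}
    for query, before in previous.items():
        after = current.get(query, 0.0)
        drop = before - after
        if drop > threshold:
            flagged[query] = round(drop, 4)
    return flagged


# Hypothetical citation share per query for two consecutive periods.
previous = {"saas procurement": 0.40, "vendor management": 0.25, "renewal tracking": 0.15}
current = {"saas procurement": 0.22, "vendor management": 0.20}

flagged = flag_drops(previous, current)
for query, drop in flagged.items():
    print(f"{query}: down {drop:.0%}")
```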

Consistent visibility comes down to having a process you actually run, not a project you revisit when numbers drop.

Measuring Generative Engine Optimization (GEO)

Traditional analytics won’t surface what’s happening in AI-generated answers. Organic traffic tells you what’s converting from traditional search. It tells you nothing about whether you’re being cited in generative responses that never produce a click.

A brand can be the most cited source in ChatGPT for its category and show zero activity in GA4. That’s the gap GEO punches in your reporting, and why your standard analytics dashboard won’t show you what’s going on.

The metrics that matter:

- AI Citation Share — how often your brand is cited across AI-generated answers for your target queries. The GEO equivalent of keyword rankings.

- Generative Share of Voice — your citation presence relative to competitors across a defined query set. Not just whether you’re visible, but whether you’re dominant.

- AI Sentiment — how generative engines characterize your brand when they cite you. A citation that frames you poorly can do more damage than no citation at all.

- Prompt-to-page coverage — how many of your target prompts have corresponding content that could plausibly be cited. Gaps here are direct GEO opportunities.

- Context tracking — which topics and prompts trigger your brand mentions. This tells you what you own versus where you’re invisible.
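The article names these metrics without giving formulas, so the sketch below is one plausible way to compute three of them, not a standard definition. It assumes you have logged which brands each AI answer cites. The data, brand labels, and function names are hypothetical.

```python
from collections import Counter


def citation_share(answers, brand):
    """Fraction of answers that cite `brand` at least once."""
    if not answers:
        return 0.0
    return sum(1 for a in answers if brand in a["cited"]) / len(answers)


def share_of_voice(answers):
    """Each brand's share of all citations, counting a brand once per answer."""
    counts = Counter(b for a in answers for b in set(a["cited"]))
    total = sum(counts.values())
    return {b: n / total for b, n in counts.items()} if total else {}


def prompt_coverage(prompts, prompt_to_page):
    """Fraction of target prompts that map to at least one page."""
    if not prompts:
        return 0.0
    return sum(1 for p in prompts if prompt_to_page.get(p)) / len(prompts)


# Hypothetical log of AI answers for four target prompts.
answers = [
    {"prompt": "p1", "cited": ["you", "a"]},
    {"prompt": "p2", "cited": ["a"]},
    {"prompt": "p3", "cited": ["you", "a", "b"]},
    {"prompt": "p4", "cited": []},
]
prompt_to_page = {"p1": ["/guide"], "p3": ["/compare"]}

share = citation_share(answers, "you")
voice = share_of_voice(answers)
coverage = prompt_coverage(["p1", "p2", "p3", "p4"], prompt_to_page)
print(share, round(voice["you"], 3), coverage)
```

Here "you" is cited in half the answers but holds only a third of all citations, which is the difference between being visible and being dominant.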

The attribution path in AI search is harder to trace than traditional SEO. A user gets an AI answer, doesn’t click, then searches your brand name three days later and converts. Your analytics connect it to branded search. The AI mention that started it never shows up.

That’s not a reason to skip measuring GEO; it’s a reason to set up the right tracking now, before the gap turns into a harder conversation with your stakeholders.

Where Generative Engine Optimization Goes Wrong

The mistakes people make in GEO are mostly the same instincts they built up in SEO, just applied somewhere those instincts don’t hold.

When visibility drops, the instinct is to publish more. That worked in traditional SEO, but in GEO, thin content at scale gives retrieval systems more to ignore, not more to cite.

Optimizing for keywords instead of entities: A page stuffed with target keywords but vague on category, use cases, and buyer context won’t earn citation share. Retrieval systems don’t match keywords. They match entities and the relationships between them.

GEO rewards site-level structure. A single well-written page inside a flat, loosely connected site will lose to a decent page inside a well-structured topic cluster. The page isn’t the unit. The cluster is.

Treating your website as the whole strategy: AI systems pull from G2, Reddit, LinkedIn, and industry publications. Strong site content with a thin third-party presence looks less credible to a retrieval system than a brand with consistent external signals. Both sides of that equation need work.

Trusting your existing dashboard to tell you the truth: Brands are losing citation share right now with nothing unusual showing up in their analytics. Absence of a signal isn’t absence of a problem. It’s a blind spot.

Waiting for things to settle: They won’t, not in a way that rewards waiting. The teams doing this work now are building up a lead that gets genuinely harder to close over time. The ones waiting are ceding ground that gets harder to reclaim every month.

GEO Without the Guesswork with Quattr

Most teams already know what needs to happen. The problem is five tools that don’t talk to each other and a workflow that falls apart between them.

Quattr brings the full workflow into one place, so your team can find the gaps, execute the fixes, and track what moves citation share without switching tabs.

- AI Citation Share Tracking — Monitor how often and where your content gets cited across AI search environments

- AI Sentiment Monitoring — Track how generative engines characterize your brand, not just whether they mention it

- E-E-A-T Content Audits — Surface trust, expertise, and experience gaps across your entire page inventory

- Topical Authority Mapping — Find coverage gaps and structural weaknesses before they cost you citation share

- Semantic Internal Linking — Build entity relationships across your content that signal topical depth to retrieval systems

- Schema & Entity Optimization — Make your expertise and authorship signals machine-readable

- Content Prioritization Engine — Focus on pages with the highest visibility upside, not just the easiest fixes

If you want to see how this works against your actual content inventory, book a demo.

FAQs on Generative Engine Optimization

Does GEO replace SEO?

No. Traditional search still drives significant traffic, and that won’t change overnight. GEO adds a layer of citations in AI-generated answers that influence decisions before a user ever clicks. Brands that treat them as competing priorities will underperform on both.

How long does GEO take to show results?

Structural changes move faster than most practitioners expect. CloudEagle tripled AI Citation Share in 12 weeks through content restructuring alone, with no new pages. Authority-building takes longer, but architectural fixes surface quickly in generative results.

Do backlinks matter for GEO?

Indirectly. Inbound citations from credible sources influence how retrieval systems assess trustworthiness. But the direct link authority mechanics of SEO don’t map cleanly onto GEO. Third-party presence, reviews, mentions, and community discussions carry weight that pure backlink counts don’t capture.