Key Takeaways

- AI engines like ChatGPT, Google AI Overviews, Gemini, and Perplexity select a tiny set of “authoritative” sources to answer queries directly, bypassing clicks and costing brands massive traffic.

- Traditional SEO tools lag behind; Quattr’s AI Visibility Dashboard tracks real-time citation rates, Share of Voice, and sentiment across all major AI engines in one view.

- Content Idea Agent builds complete strategies from GSC data plus AI surfaces, auto-clustering intent, and mapping coverage gaps across 8 platforms.

- GIGA predicts citability before publishing, generates on-brand images and landing pages in one session, and automates internal linking for topical authority.

- Technical Health ensures AI crawlability, treating Core Web Vitals as a must for selection; content alone isn’t enough without it.

- Quattr compounds measurement, strategy, creation, and deployment into one workflow, turning AI visibility from reactive monitoring to proactive wins.

When ChatGPT answers “best CRM for startups,” it cites three brands. If yours isn’t one of them, 40,000 monthly searches disappear before anyone clicks. AI-sourced traffic converts at 2.4x the rate of traditional organic search, so missing these citations directly impacts the pipeline. Most teams don’t know which three brands were chosen, or why theirs was excluded.

Across Google AI Overviews, ChatGPT, Gemini, and Perplexity, large language models now resolve user queries before a click happens. These systems don’t retrieve pages based on keywords. They answer from a small set of sources they treat as authoritative, current, and structurally reliable.

That shift is changing how enterprise teams evaluate SEO platforms. In the G2 Spring 2026 Report, Quattr ranked #1 for Multi-step Planning and Autonomous Task Execution, reflecting a broader market move toward AI-native search visibility platforms built for execution, not just reporting.

Traditional tools show you where you’re losing. Quattr changes the outcome. Each section below covers a specific Quattr capability and how it directly affects AI selection.

1. AI Visibility Dashboard: Measuring How AI Engines Actually Select Your Brand

Most teams discover AI visibility loss through trailing indicators: traffic dips, lower CTR, and competitors appearing in summaries they don’t control. By then, the selection logic of AI search platforms has already shifted.

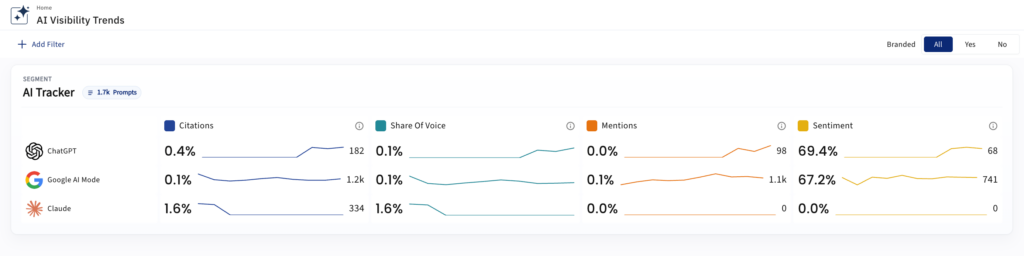

The AI Visibility Dashboard is Quattr’s executive-level interface for tracking brand presence across AI answer engines (ChatGPT, Google AI Mode, Gemini, Perplexity, and Claude) simultaneously in a single session, with faster metric loading than a model-by-model reporting workflow.

The dashboard opens on a snapshot view covering all tracked models. Each row displays Citation rate, Share of Voice, Mentions, and Sentiment, alongside sparkline trendlines. If multiple trackers are configured (by product line, region, or keyword segment), each loads independently.

What the dashboard tracks at the citation layer:

- How often your domain is cited as a source in AI-generated answers, per engine

- Share of Voice: a weighted combination of Mentions and Citations, with Citations carrying a higher weight. This is the correct metric for competitive benchmarking with executive stakeholders

- Prompt-level traceability showing where each citation originates

- Which competitors have gained or lost visibility over the active period

- Sentiment framing inside AI responses: positive, neutral, negative, tracked over time
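To make the Share of Voice definition above concrete, here is a minimal sketch of one way a weighted combination could be computed. The 0.7 and 0.3 weights, the `BrandCounts` type, and the function names are assumptions for illustration only; the article states that Citations weigh more than Mentions but does not publish the actual formula.

```python
from dataclasses import dataclass

# Hypothetical weights: the article only says citations carry a higher weight.
CITATION_WEIGHT = 0.7
MENTION_WEIGHT = 0.3


@dataclass
class BrandCounts:
    mentions: int   # times the brand is named in AI answers
    citations: int  # times the brand's domain is cited as a source


def weighted_score(counts: BrandCounts) -> float:
    """Combine mentions and citations into one score, favoring citations."""
    return CITATION_WEIGHT * counts.citations + MENTION_WEIGHT * counts.mentions


def share_of_voice(brands: dict[str, BrandCounts]) -> dict[str, float]:
    """Return each brand's fraction of the total weighted score (sums to 1)."""
    total = sum(weighted_score(c) for c in brands.values())
    if total == 0:
        return {name: 0.0 for name in brands}
    return {name: weighted_score(c) / total for name, c in brands.items()}


example = {
    "your-brand": BrandCounts(mentions=10, citations=10),
    "competitor": BrandCounts(mentions=10, citations=0),
}
sov = share_of_voice(example)
```

With equal mentions, the brand that also earns citations takes the larger share, which is the behavior the weighting is meant to produce.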

Branded vs. Non-Branded tracking: The toggle between these two modes is one of the most strategically significant controls in the interface. Non-Branded is the default; it measures how well content is cited in response to category-level queries a prospect would ask without knowing the brand by name. Branded tracking surfaces how AI engines describe the brand when it’s named directly, which is the correct view for reputation monitoring and brand safety.

Trend View and Drill-Down View: The Trend View supports period-over-period comparison at daily, weekly, monthly, or quarterly granularity, with delta reporting showing total citation change and percentage shift. That comparison state carries forward into all drill-downs opened in the same session. The Drill-Down View filters citation and Share of Voice data by competitor, prompt, cited URL, intent grouping, and geography, enabling direct attribution of citation performance to specific pages and query clusters.
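The delta reporting described above comes down to simple arithmetic. A small sketch, assuming citation counts are available per period (the function name and return shape are illustrative, not Quattr’s API):

```python
from typing import Optional, Tuple


def citation_delta(previous: int, current: int) -> Tuple[int, Optional[float]]:
    """Return (total change, percentage shift) between two periods.

    The percentage is None when the previous period had no citations,
    because a shift from zero is undefined.
    """
    change = current - previous
    pct = None if previous == 0 else change / previous * 100
    return change, pct


change, pct = citation_delta(previous=40, current=50)
```

Going from 40 to 50 citations is a change of 10, or a 25% shift.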

Advanced Exploration: The Explore Trends control opens two deeper pathways: customizing the Looker view to add metrics and save to existing dashboards, or opening a full comparative trend view for the quarter with all active filters preserved.

AI selection compounds. Brands that accumulate consistent citation signals across a topic become the default source over time. The AI Visibility Dashboard makes that accumulation measurable, and connects directly to the Quattr workflows that close the gaps it surfaces.

2. Topic Intelligence: Understanding What AI Is Being Asked

AI systems are not prompted with keywords. They receive full-context questions that bundle intent, constraints, and expected outcomes into a single request. Teams still planning content around keyword lists are missing the upstream signal that determines whether AI systems consider their content at all.

Topic Intelligence aggregates individual AI prompts into structured topics that reflect how demand clusters in LLMs, identifies recurring question patterns across a topic area, and maps the breadth of prompts tied to the same underlying informational need. This shifts planning from one-off prompt tracking to topic-level understanding, which is how LLMs generalize and retrieve information.
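As a rough illustration of grouping prompts into topics, the sketch below clusters prompts by word overlap (Jaccard similarity). This is a deliberately simple stand-in, not Quattr’s method, which the article does not describe; the stopword list, the 0.4 threshold, and all function names are assumptions.

```python
import re

# Hypothetical stopword list; a real system would use something richer.
STOPWORDS = {"a", "an", "the", "for", "of", "to", "is", "in", "what", "which", "best"}


def tokens(prompt: str) -> frozenset:
    """Lowercase the prompt and keep its non-stopword terms."""
    words = re.findall(r"[a-z0-9]+", prompt.lower())
    return frozenset(w for w in words if w not in STOPWORDS)


def jaccard(a: frozenset, b: frozenset) -> float:
    """Overlap between two term sets, from 0 (disjoint) to 1 (identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def group_prompts(prompts: list, threshold: float = 0.4) -> list:
    """Greedily assign each prompt to the first topic whose seed prompt is similar."""
    topics: list = []
    for prompt in prompts:
        for topic in topics:
            if jaccard(tokens(prompt), tokens(topic[0])) >= threshold:
                topic.append(prompt)
                break
        else:
            topics.append([prompt])  # no similar topic found, start a new one
    return topics


topics = group_prompts([
    "best CRM for startups",
    "which CRM is best for small startups",
    "how to improve core web vitals",
])
```

The two CRM prompts land in one topic and the Core Web Vitals prompt forms its own, showing how differently worded questions can map to the same underlying need.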

3. Content Idea Agent: Building a Content Strategy from Full Demand Signal

Most enterprise content strategies start from a GSC export that covers a fraction of actual search demand. Intent is tagged manually, which means it drifts across contributors and quarters. Three related queries receive three separate briefs instead of a single authoritative page. Competitive analysis runs against Classic Google only, which misses the surfaces where AI citation share is actually won.

The Content Idea Agent fixes this at the source.

- It ingests GSC-connected keyword data (real clicks and impressions, not modeled estimates) and applies intent clustering using your existing taxonomy consistently across the full keyword universe.

- Competitive coverage is mapped across 8 platforms: Classic Google, Google AI Mode, Google AI Overview, ChatGPT, Perplexity, Gemini, Claude, and Bing.

- The Coverage Planner organizes output into Themes and Topics with importance-weighted Coverage Scores.

- The Strategize layer identifies the strongest pillar anchor, calculates the page budget to reach a target Coverage Score, maps internal linking across all proposed pages, and auto-selects page types from SERP distribution patterns.

- Accepted plans route directly to GIGA for generation, with no re-tagging and no handoff delay.
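The Coverage Score and page budget ideas above can be sketched as follows. This is one plausible reading, assuming the score is the importance-weighted share of topics already covered and that each new page covers exactly one topic; the article does not specify the calculation, and every name and number here is hypothetical.

```python
def coverage_score(importance: dict, covered: set) -> float:
    """Importance-weighted fraction of topics that already have a page."""
    total = sum(importance.values())
    if total == 0:
        return 0.0
    return sum(w for topic, w in importance.items() if topic in covered) / total


def page_budget(importance: dict, covered: set, target: float) -> list:
    """Pick uncovered topics, most important first, until the target is reached."""
    covered = set(covered)  # copy so the caller's set is not modified
    plan = []
    for topic, _ in sorted(importance.items(), key=lambda kv: kv[1], reverse=True):
        if coverage_score(importance, covered) >= target:
            break
        if topic not in covered:
            covered.add(topic)
            plan.append(topic)
    return plan


importance = {"pricing": 5, "integrations": 3, "security": 2}
existing = {"security"}
plan = page_budget(importance, existing, target=0.6)
```

Here a single page on the highest-weight uncovered topic is enough to move the score past the target, so the budget is one page.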

AI systems evaluate topical authority across a domain’s full content architecture. A strategy built from a narrow export produces fragmented coverage, which is exactly what prevents AI systems from treating a domain as a reliable source on a given subject.

4. Content AI & GIGA: Predicting and Executing AI-Citable Content

Publishing more content does not improve AI citation rates. AI systems favor content that is structurally legible, semantically complete, and directly aligned with how answers are assembled. Most teams discover the misalignment after publishing, when AI visibility fails to materialize. GIGA shifts that feedback loop to before publication.

Before publishing, GIGA evaluates topical coverage relative to what AI-selected sources cover on the same subject; entity presence and clarity based on how LLMs construct answers; and structural signals including header logic, answer-first formatting, and section coherence. Content updated within the last 30 days receives 3.2 times more citations than older material. The output is a predictive citability signal, not a generic quality score, indicating whether a piece is likely to be selected when AI systems construct answers for that topic.

In execution, GIGA produces high-intent content briefs grounded in topic intelligence, reformats headers into question-aligned structures AI systems favor, and applies clarity improvements that reduce ambiguity in AI interpretation. For existing content, GIGA re-evaluates pages against current AI selection signals and flags where targeted refreshes are needed, not full rewrites.

5. Page Draft Image Generation: On-Brand Visuals Inside the Editor

AI citation readiness includes relevant, on-brand visuals. Sourcing images outside the content workflow adds handoff delay to the same publishing cycle that determines whether a page competes for AI selection.

Quattr’s Generate Image feature is built into the Page Draft editor and the Guided Editor. Images are generated from a topic input and optional contextual instructions, with aspect ratio and brand color controls, then reviewed, alt-tagged, and inserted without leaving the platform. Alt text is editable before insertion. If no generated option fits, regeneration produces a new set.

6. GIGA Landing Page Generator: From Keyword to Live Page in One Session

The keyword gap is identified. The brief is written. The page still isn’t live. That gap compounds across enterprise content programs because the path from a validated keyword to a published URL runs through content, design, and engineering in separate sessions, while AI systems lock in their reference pages from whoever is already there.

Quattr’s Landing Page Generator compresses the entire path into a single supervised session. It is the only landing page builder natively designed for Generative Engine Optimization: it is structured around what AI systems surface, and it turns your PPC keywords into publish-ready landing pages in minutes.

The workflow runs across five stages:

- Keyword confirmation from live demand signals

- Multi-surface analysis across 8 traffic sources (Classic Google, Google AI Mode, Google AI Overview, ChatGPT, Perplexity, Gemini, Claude, and Bing)

- Page structure scaffolded from the Section DNA of a reference URL, with an independent Saved Design System governing brand style

- A grounded research brief with 8 human-approved inputs

- Single-pass page generation powered by Claude Opus 4.6 and the latest AI models

The structural and retrieval decisions (which surface to optimize against, which sections to include, which architecture signals to build from) happen in stages 2 and 3, before content is written. Standard landing page builders don’t operate this way. A page citation-ready for ChatGPT or Gemini requires different structural decisions than a page optimized for a traditional blue-link result, and those decisions can’t be retrofitted after generation.

7. Automated Internal Linking: Building Topical Authority for AI Systems

AI systems don’t evaluate pages in isolation. They evaluate domains as knowledge graphs and favor sites that demonstrate coherent coverage across a topic area with clear relationships between foundational and supporting pages. Fragmented internal linking weakens that signal, even when individual pages are well-written.

Quattr’s NLP-driven analysis identifies what each page is substantively about, how pages relate semantically across a topic cluster, and which pages function as pillars versus supporting coverage. Link prioritization is weighted by demand signals, semantic proximity, and authority flow, directing internal link equity from established pages to underserved clusters. All patterns are reviewed in a sandbox environment before going live, with controls over anchor text usage and insertion frequency.
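One way to picture the link prioritization described above is a weighted score over the three named signals. The sketch below assumes each signal has already been normalized to a 0 to 1 range; the weights, the `LinkCandidate` type, and the function names are hypothetical and do not reflect Quattr’s actual model.

```python
from dataclasses import dataclass

# Hypothetical weights; the article names the signals but not their weighting.
WEIGHTS = {"demand": 0.4, "similarity": 0.4, "authority": 0.2}


@dataclass(frozen=True)
class LinkCandidate:
    source_url: str    # established page that would carry the link
    target_url: str    # underserved page that would receive it
    demand: float      # search demand for the target's topic, 0..1
    similarity: float  # semantic proximity of source and target, 0..1
    authority: float   # authority the source can pass on, 0..1


def priority(link: LinkCandidate) -> float:
    """Weighted sum of the three signals; higher means insert sooner."""
    return (
        WEIGHTS["demand"] * link.demand
        + WEIGHTS["similarity"] * link.similarity
        + WEIGHTS["authority"] * link.authority
    )


def rank_links(candidates: list, limit: int) -> list:
    """Return the top `limit` candidates, e.g. to cap insertions per page."""
    return sorted(candidates, key=priority, reverse=True)[:limit]


candidates = [
    LinkCandidate("/blog/guide", "/product/a", demand=0.2, similarity=0.9, authority=0.1),
    LinkCandidate("/pillar", "/product/b", demand=0.9, similarity=0.8, authority=0.5),
]
top = rank_links(candidates, limit=1)
```

The `limit` argument stands in for the insertion-frequency control mentioned above: only the highest-priority links within the cap would go forward to sandbox review.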

For Cloudeagle, without publishing a single new page, Quattr restructured 33 commercial pages, optimizing semantic internal linking and content architecture, and achieved 113% organic click growth alongside a 3x increase in AI Citation Share in 12 weeks. The content was already there. The structure was the problem.

8. Technical Health & AI Crawlability: Ensuring AI Systems Can Parse Your Site

Quattr evaluates whether critical content is accessible to modern crawlers, whether the site structure supports clean parsing of main content, and whether internal relationships reinforce or confuse page intent. AI systems rely more heavily on clean content extraction, consistent structural patterns, and predictable internal relationships than standard search crawlers do.

Core Web Vitals are treated as a selection constraint, not a separate audit track. Pages that are slow, unstable, or resource-heavy are less likely to be treated as dependable sources when AI systems choose from a limited candidate set.
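A minimal sketch of treating Core Web Vitals as a gate rather than a separate audit is shown below. The thresholds are Google’s published "good" values (LCP at or under 2.5 seconds, INP at or under 200 milliseconds, CLS at or under 0.1); the `PageVitals` type, the function names, and the pass/fail gating logic are assumptions, since the article does not describe how Quattr applies the constraint.

```python
from dataclasses import dataclass

# Google's published "good" thresholds for Core Web Vitals.
LCP_GOOD_SECONDS = 2.5
INP_GOOD_MS = 200
CLS_GOOD = 0.1


@dataclass
class PageVitals:
    url: str
    lcp_seconds: float  # Largest Contentful Paint
    inp_ms: float       # Interaction to Next Paint
    cls: float          # Cumulative Layout Shift


def failing_vitals(page: PageVitals) -> list:
    """List the metrics that miss the 'good' threshold for this page."""
    failures = []
    if page.lcp_seconds > LCP_GOOD_SECONDS:
        failures.append("LCP")
    if page.inp_ms > INP_GOOD_MS:
        failures.append("INP")
    if page.cls > CLS_GOOD:
        failures.append("CLS")
    return failures


def is_eligible(page: PageVitals) -> bool:
    """Hypothetical gate: a page qualifies only if every metric is 'good'."""
    return not failing_vitals(page)


fast = PageVitals("/pricing", lcp_seconds=2.1, inp_ms=150, cls=0.05)
slow = PageVitals("/blog/old-post", lcp_seconds=3.4, inp_ms=150, cls=0.25)
```

Returning the list of failing metrics, not just a boolean, keeps the check actionable: it shows which issue to fix before the page can compete for selection.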

How the full platform compounds:

- AI Visibility Dashboard identifies where citation gaps and competitive shifts are occurring across all tracked engines

- Topic Intelligence identifies where to focus within the demand landscape

- Content Idea Agent builds the strategy from the full demand signal

- Content AI and GIGA qualify and improve content before it is published

- Page Draft Image Generation produces on-brand visuals inside the same workflow

- GIGA Landing Page Generator closes the gap from keyword to live page in one session

- Automated Internal Linking reinforces topical authority at scale

- Technical Health ensures AI crawlability and eligibility

- Execution ensures every improvement reaches the live site where it counts

Without this layer, AI visibility work stays theoretical.

Why Quattr vs. Just Dashboards

Most SEO and AI visibility platforms end at visualization: aggregating signals, surfacing trends, and reporting what has already happened. AI systems select sources upstream, often before a click occurs. By the time visibility loss shows up in traffic data, the underlying selection logic has already shifted.

What Quattr connects into one system: AI visibility measurement across ChatGPT, Google AI Overviews, and Perplexity; topic intelligence and content strategy architecture; content qualification and predictive citability scoring; on-brand visual generation inside the content editor; internal linking reinforcement at scale; technical eligibility monitoring; and direct deployment to the live site.

This isn’t a replacement for SEO fundamentals. It’s how SEO fundamentals are applied when AI systems sit between users and results, and when the work is to shape what AI chooses, not just monitor what it does.

From Visibility to Selection with Quattr

Quattr is built for teams that need to understand why AI systems select certain sources, where their visibility breaks down, and what specific changes will materially improve selection outcomes.

If you want to evaluate how your brand currently appears in AI-generated answers and identify execution gaps, book a demo to see your baseline.

FAQs on Quattr’s AI Visibility and Analytics Capabilities

Quattr goes beyond monitoring traffic drops; it measures real AI citations, Share of Voice, and predictive citability across ChatGPT, Gemini, and more, then executes fixes like GIGA content and linking in one platform.

Use the toggle: Non-branded (default) tracks category queries for prospect capture; branded monitors reputation when your name is mentioned directly.

Yes. The GIGA Landing Page Generator handles keyword analysis, structure, research, and single-pass generation using Claude Opus 4.6 or another of the latest AI models, then publishes to your live site in minutes.

Yes. Quattr’s GIGA Landing Page Generator takes validated keywords, analyzes AI and search demand across multiple surfaces, builds page structure, generates content, and publishes AI-optimized landing pages in a single workflow.

Yes. AI systems depend heavily on crawlability, clean structure, Core Web Vitals, and consistent page relationships. Even strong content can fail to appear in AI-generated answers if technical signals reduce trust or accessibility.