Key Takeaways

- AI search doesn’t rank pages; it retrieves and cites sources. Winning means becoming content that AI systems can clearly extract, trust, and reference.

- Citability, topical authority, and semantic structure are the three levers that determine whether your content shows up in AI-generated answers.

- Traditional SEO metrics miss the answer layer. Share of Answers, citations, and query coverage are the new signals that matter.

- Most visibility gaps aren’t content problems but structure and clarity issues. Fixing internal linking, content architecture, and direct answerability drives the fastest gains.

Your brand showed up in a ChatGPT answer yesterday. Or it didn’t, and your competitor did. Most teams have no way to know which one happened. That’s the problem.

Traditional metrics won’t catch any of it. SEO tools don’t track what ChatGPT told a prospect about your brand at 11pm, in the middle of a buying decision. That gap isn’t a future concern. It’s already affecting the pipeline, and most teams have no instrumentation for it.

The brands winning in AI search aren’t the ones with the largest content budgets. They’re the ones who figured out what to measure first. That’s where the right tooling matters.

What Is AI Visibility and Why Does It Matter?

AI visibility is how often, and how favorably, your brand gets mentioned, cited, or recommended in AI-generated answers across platforms like ChatGPT, Google AI Mode, Perplexity, Gemini, and Claude.

This is different from traditional SEO in one critical way: you’re not trying to rank on a SERP. You’re trying to become the source an AI engine trusts when generating its answer. That shifts the KPIs entirely: citations, brand mentions, share of voice, and sentiment inside AI responses are what actually matter here.

The stakes are higher than most teams have priced in. AI-powered search is already shaping purchase decisions at scale. The brands earning consistent citations today are compounding authority in a layer that rank tracking doesn’t touch and can’t measure.

How AI Search Actually Retrieves and Cites Content

Traditional search is a matching problem. A crawler indexes your page, an algorithm scores it against a query, and a ranked list comes out. The signals (relevance, authority, technical health) are well documented, and the optimization playbook has been refined over two decades.

AI search is a retrieval and synthesis problem. The mechanism is different enough that the old playbook only partially applies.

When a user submits a query to an AI-powered search system, the model doesn’t scan a ranked index. It retrieves chunks of content it has determined are semantically relevant to the query, evaluates their credibility and coherence, and constructs an answer, often citing the sources it drew from. That retrieval decision is made at the content level, not the domain level. A well-structured, semantically rich page on a mid-authority domain can outperform a thin page on a high-authority one.
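
To make the chunk-level idea concrete, here is a minimal sketch of passage retrieval. It assumes a simplified bag-of-words cosine similarity in place of the dense embeddings production systems typically use, and the page names and passages are hypothetical. What it illustrates is that scoring happens per passage, so domain-level authority never enters the calculation.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a crude stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[tuple[str, str]], k: int = 2) -> list[tuple[str, float]]:
    """Score every (source, passage) chunk against the query and return the top k.

    The score depends only on the passage text, not on the authority
    of the domain the passage came from.
    """
    q = tokenize(query)
    scored = [(source, cosine(q, tokenize(passage))) for source, passage in chunks]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Hypothetical chunks: one precise passage on a mid-authority site, one vague passage on a high-authority site.
chunks = [
    ("mid-authority.example/guide", "Share of Answers measures how often your content is cited in AI answers."),
    ("high-authority.example/blog", "Visibility matters a lot for brands in many ways today."),
]
print(retrieve("what does share of answers measure", chunks, k=1))
```

The precise passage wins because it shares vocabulary with the question; the vague one scores zero no matter which domain hosts it.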

What the Retrieval Layer Actually Evaluates

Three things govern whether your content gets pulled into an AI-generated answer:

Semantic clarity — Can the model extract a clean, unambiguous answer from your content? Pages that bury the lead, over-qualify every claim, or use vague categorical language are harder to parse and less likely to be cited. The model is looking for content it can lift and trust.

Topical depth and coherence — AI systems favor sources that demonstrate a comprehensive understanding of a subject, not just keyword coverage. A page that thoroughly answers the primary question and anticipates the adjacent ones, supported by a well-structured content cluster around it, signals authority in a way a single optimized page can’t.

Structural legibility — Heading hierarchy, internal linking, schema markup, and clean HTML aren’t just crawlability hygiene anymore. They’re the structural signals that help AI systems understand what a page is about, how it relates to other content on your site, and whether it’s a reliable source to cite. This is where “good enough” technical SEO stops being good enough.
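
One part of structural legibility that is easy to check automatically is heading hierarchy. The sketch below uses Python's standard-library `html.parser` to flag headings that skip a level (for example, an `<h4>` directly under an `<h2>`). Treating skipped levels as a defect is a common accessibility and SEO convention, used here as an assumption about what "clean" hierarchy means; it is not a documented retrieval rule of any AI system.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect heading levels (h1-h6) in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_levels(html: str) -> list[tuple[int, int]]:
    """Return (previous_level, level) pairs where a heading jumps down more than one level."""
    parser = HeadingCollector()
    parser.feed(html)
    return [
        (prev, cur)
        for prev, cur in zip(parser.levels, parser.levels[1:])
        if cur > prev + 1
    ]


page = "<h1>AI Visibility</h1><h2>Citability</h2><h4>Examples</h4><h2>Measurement</h2>"
print(skipped_levels(page))  # [(2, 4)]
```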

Why This Changes the Optimization Target

The implication for how you build and structure content is concrete. You’re no longer optimizing purely for a ranking algorithm that rewards inbound authority signals. You’re optimizing for a retrieval system that rewards content it can parse, trust, and cite.

That means internal linking architecture matters more than most teams currently treat it. It means content refreshes targeting semantic gaps, not just keyword gaps, move the needle. And it means thin pages that rank through domain authority alone are liabilities in the answer layer, even when they hold their position in traditional SERPs.

The Three Pillars of AI Search Visibility

If the previous section explains how retrieval works mechanically, this one covers what you actually optimize against. Quattr’s framework for AI Search Visibility rests on three pillars, intended not as a taxonomy but as an execution checklist. Each one has a direct operational implication.

1. Citability

Citability is whether your content can be extracted and trusted by an AI system constructing an answer. It’s the most underappreciated pillar because it lives at the writing and structure level, not the strategy level, and most SEO audits don’t touch it.

Content that gets cited tends to share a few characteristics: it states its position clearly and early, it uses precise language rather than hedged generalities, and it’s structured so a specific passage can stand alone as an answer. Content that doesn’t get cited is often technically sound but written to rank rather than to be referenced: dense with keyword variation and light on direct assertion.

Improving citability often means editing, not publishing: restructuring how a page opens, tightening its claims, and ensuring each section answers a discrete question before expanding on it. These are small changes with measurable impact on Share of Answers.

2. Topical Authority

Topical authority in the context of AI search isn’t just about content volume; it’s about coherence. An AI system evaluating whether to cite your content on a subject is also implicitly evaluating the ecosystem of content around it. A single well-optimized page sitting in a sparse content cluster is a weaker citation candidate than an equally good page embedded in a tight, well-linked topical hub.

This is where content architecture becomes a competitive lever. Teams that have built genuine depth, meaning comprehensive coverage of a topic space with clean internal links connecting related pages, have a structural advantage in the answer layer that’s hard to replicate quickly.

Simpplr built exactly this. By optimizing over 200 pages and consolidating content into topical hubs using Quattr, they became the number one organic result in the employee intranet software category, doubling non-brand organic traffic year over year and reducing paid dependency from 55% to under 30%. The rankings followed the architecture, not the other way around.

3. Semantic Structure

Semantic structure is the connective tissue between the first two pillars. It’s how you signal to an AI retrieval system what your content is about, how it relates to adjacent content, and why it should be trusted.

In practice, this means a heading hierarchy that reflects genuine information architecture — not just keyword placement. It means internal links that follow topical logic rather than PageRank distribution. It means schema markup that makes entity relationships explicit rather than implicit. And it means a site structure where a model crawling your domain can construct an accurate map of your expertise.
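
As an illustration of making entity relationships explicit, the sketch below builds a schema.org JSON-LD block for a hypothetical subtopic page. It uses the schema.org `Article` type with the `about` and `isPartOf` properties that `Article` inherits from `CreativeWork`; the URLs, headline, and topic are placeholders. Which properties a given AI system actually reads is not publicly documented, so treat this as one reasonable encoding rather than a requirement.

```python
import json


def article_jsonld(url: str, headline: str, topic: str, hub_url: str) -> str:
    """Build a schema.org Article as a JSON-LD string.

    `about` names the entity the page covers; `isPartOf` points at the hub
    page, so the cluster relationship is stated explicitly instead of only
    being implied by internal links.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "@id": url,
        "url": url,
        "headline": headline,
        "about": {"@type": "Thing", "name": topic},
        "isPartOf": {"@type": "WebPage", "@id": hub_url},
    }
    return json.dumps(data, indent=2)


# Hypothetical page inside an "AI search visibility" topic cluster.
snippet = article_jsonld(
    url="https://www.example.com/ai-visibility/citability",
    headline="What Makes Content Citable by AI Search",
    topic="AI search visibility",
    hub_url="https://www.example.com/ai-visibility",
)
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```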

CloudEagle demonstrates what semantic structure work actually delivers. Without publishing a single new page, Quattr restructured 33 commercial pages, optimizing semantic internal linking and content architecture, and achieved 113% organic click growth alongside a 3x increase in AI Citation Share in 12 weeks. The content was already there. The structure was the problem.

These three pillars aren’t independent workstreams. Citability without topical authority produces pages that could be cited but aren’t trusted enough to be. Topical authority without semantic structure produces depth that AI systems can’t navigate. The compounding effect comes from working all three together, which is where most teams currently have the largest execution gap.

How to Measure AI Search Visibility

Measuring AI Search Visibility starts with accepting that your current stack wasn’t built for it. Rank trackers, GSC, and GA4 report on SERP positions and what happened after the click. They don’t tell you what’s happening in the answer layer before it.

The core metric to track is Share of Answers: across the queries that matter to your business, how often your content appears as a cited source in AI-generated responses. Not impressions. Not mentions. Actual citations in answers your buyers are receiving.
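
A minimal sketch of the calculation, assuming you already have a log of AI answers for a fixed set of tracked queries, each recording which domains it cited. The `AnswerRecord` structure and the domain names are hypothetical, and how the answers are collected (manual sampling, an API, or a tracking platform) is outside the scope of this example.

```python
from dataclasses import dataclass


@dataclass
class AnswerRecord:
    """One AI-generated answer to a tracked query (hypothetical schema)."""
    query: str
    platform: str
    cited_domains: list[str]


def share_of_answers(records: list[AnswerRecord], domain: str) -> float:
    """Fraction of tracked answers that cite `domain` as a source at least once."""
    if not records:
        return 0.0
    cited = sum(1 for r in records if domain in r.cited_domains)
    return cited / len(records)


records = [
    AnswerRecord("best intranet software", "chatgpt", ["yourbrand.com", "competitor.com"]),
    AnswerRecord("best intranet software", "perplexity", ["competitor.com"]),
    AnswerRecord("intranet vs portal", "gemini", ["yourbrand.com"]),
    AnswerRecord("intranet pricing", "chatgpt", []),
]
print(share_of_answers(records, "yourbrand.com"))  # 0.5
```

Only cited sources count here; an answer that merely mentions the brand without citing it would not move the number, which matches the definition above.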

Beyond that, you’re looking at three supporting signals:

- Citation velocity — is your Share of Answers growing as you make structural changes?

- Query coverage — which topics are you being cited for, and where are the gaps relative to your content footprint?

- Citation-to-click conversion — of the AI answers citing you, how many are driving measurable traffic back to your site, as tracked in Search Console and GA4?
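
The three supporting signals can be sketched with the same kind of data. This example assumes hypothetical per-period Share of Answers snapshots, the set of queries where you were cited versus the queries your content targets, and citation and click counts you have already joined from your own analytics. None of these function names come from a specific tool, and the click ratio is a simple approximation rather than an attribution model.

```python
def citation_velocity(snapshots: list[float]) -> list[float]:
    """Period-over-period change in Share of Answers (for example, week to week)."""
    return [round(cur - prev, 4) for prev, cur in zip(snapshots, snapshots[1:])]


def query_coverage(cited_queries: set[str], target_queries: set[str]) -> tuple[float, set[str]]:
    """Fraction of target queries where you were cited, plus the uncovered gaps."""
    if not target_queries:
        return 0.0, set()
    covered = cited_queries & target_queries
    return len(covered) / len(target_queries), target_queries - covered


def citation_to_click(citations: int, clicks: int) -> float:
    """Clicks attributed to AI referrals per observed citation (a rough ratio)."""
    return clicks / citations if citations else 0.0


print(citation_velocity([0.10, 0.14, 0.21]))  # [0.04, 0.07]
print(query_coverage({"pricing", "security"}, {"pricing", "security", "migration", "adoption"}))
print(citation_to_click(40, 10))  # 0.25
```

Rounding the velocity values keeps floating-point noise (such as 0.04000000000000001) out of reports.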

The measurement infrastructure matters because AI Search Visibility without a feedback loop is just guesswork. You need to know which content changes are moving Share of Answers, and which aren’t, to run an efficient optimization cycle.

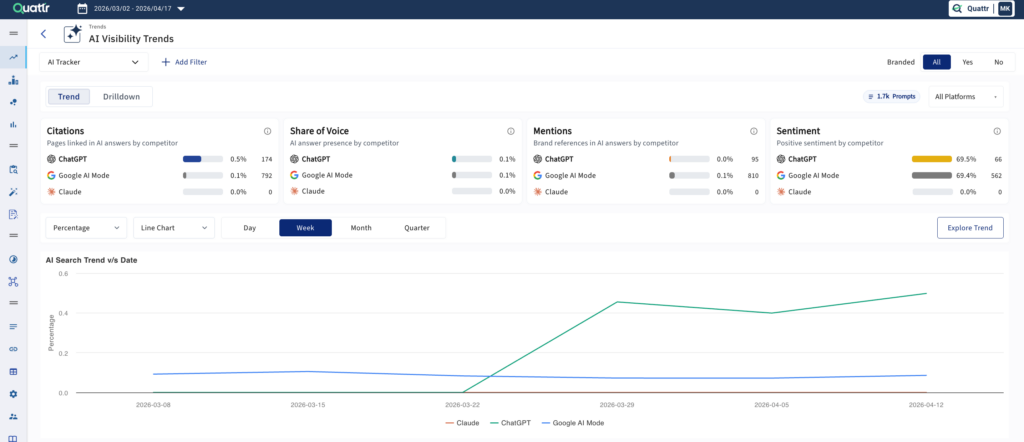

Quattr’s AI visibility tracking showed us the full picture across Google AI Overviews, ChatGPT, Perplexity, Gemini, and Claude. But what really got my attention was the sentiment analysis. It doesn’t just tell us whether a competitor was cited, it shows how AI models characterize our brand versus theirs.

What Kills AI Visibility and How to Fix It

Most AI visibility problems aren’t content problems. They are structural and governance problems that the content is sitting on top of. The symptom is low Share of Answers. The cause is almost always one of the following.

Thin Pages Holding Rankings They Haven’t Earned

Domain authority carries pages in traditional search that have no business ranking. In the answer layer, that scaffolding doesn’t exist. An AI retrieval system evaluating a 400-word page that broadly covers a topic but answers nothing precisely will skip it, regardless of how many links point to the domain.

The fix isn’t always new content. It’s interrogating what the page actually answers and whether it answers it well enough to be cited. Often, consolidating two thin pages into one coherent, well-structured piece does more for Share of Answers than publishing three new ones.

Broken Topical Architecture

Gaps in your content cluster are visible to AI retrieval systems in a way they never were to ranking algorithms. If your hub page on a topic links out to four subtopics but is missing two that users commonly ask about, the model registers incomplete coverage and defaults to a more comprehensive source.
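
The hub scenario in the paragraph above can be checked mechanically as a set difference. The sketch assumes you maintain a list of subtopics users commonly ask about (for example, from query research) and can extract the subtopic pages a hub links to; the URL slugs are hypothetical.

```python
def missing_subtopics(hub_links: set[str], expected_subtopics: set[str]) -> list[str]:
    """Subtopics users ask about that the hub page does not link to, sorted for stable output."""
    return sorted(expected_subtopics - hub_links)


# A hub linking to four subtopics while users also ask about two more.
hub_links = {"/intranet/features", "/intranet/pricing", "/intranet/security", "/intranet/integrations"}
expected = hub_links | {"/intranet/migration", "/intranet/adoption"}
print(missing_subtopics(hub_links, expected))  # ['/intranet/adoption', '/intranet/migration']
```

A missing entry can mean either that the page exists but is not linked from the hub, or that it has not been written yet; the fix differs, so the output is a starting list for review rather than a work order.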

Kiteworks faced this at scale. They needed to grow qualified organic traffic without sacrificing content quality or execution speed. Using Quattr’s AI-driven workflows, they increased their monthly output of high-quality content 2.5x, filling topical gaps systematically rather than opportunistically. The result was 300% growth in non-brand traffic over 19 months. The volume wasn’t the strategy; the coverage coherence was.

Poor Internal Linking Logic

Internal linking is how you tell a retrieval system which pages are authoritative on which topics. Most sites link for navigation or PageRank distribution. Neither maps well to topical authority signals that AI systems use.

Links should follow content relationships: a page on a subtopic should link to the hub, related subtopics should cross-link where the subject matter genuinely overlaps, and commercial pages should be anchored into the informational architecture around them. When that logic is missing, even strong content sits in isolation.
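
Two of these rules lend themselves to an automated audit. The sketch below models pages with a hypothetical `Page` structure (URL, role, cluster, outbound links) and flags subtopic pages that don't link to their hub and commercial pages with no inbound links from their own cluster. The cross-linking rule is deliberately left out, because deciding where subject matter "genuinely overlaps" still needs editorial judgment.

```python
from dataclasses import dataclass, field


@dataclass
class Page:
    """A page in a topic cluster (hypothetical model)."""
    url: str
    role: str  # "hub", "subtopic", or "commercial"
    cluster: str
    links_to: set[str] = field(default_factory=set)


def linking_issues(pages: list[Page]) -> list[str]:
    """Flag pages that break the hub-and-spoke rules described in the text."""
    hubs = {p.cluster: p.url for p in pages if p.role == "hub"}
    issues = []
    for page in pages:
        hub = hubs.get(page.cluster)
        # Rule 1: every subtopic page should link up to its hub.
        if page.role == "subtopic" and hub and hub not in page.links_to:
            issues.append(f"{page.url}: subtopic does not link to its hub {hub}")
        # Rule 2: commercial pages should receive links from their own cluster.
        if page.role == "commercial":
            inbound = [
                p.url for p in pages
                if p is not page and p.cluster == page.cluster and page.url in p.links_to
            ]
            if not inbound:
                issues.append(f"{page.url}: commercial page has no inbound links from its cluster")
    return issues


pages = [
    Page("/intranet", "hub", "intranet", {"/intranet/features", "/intranet/security"}),
    Page("/intranet/features", "subtopic", "intranet", {"/intranet"}),
    Page("/intranet/security", "subtopic", "intranet", set()),
    Page("/demo", "commercial", "intranet", {"/intranet"}),
]
for issue in linking_issues(pages):
    print(issue)
```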

Content Written to Rank, Not to Answer

Keyword-optimized content that hedges, qualifies, and circles its subject without landing a clear answer is the most common AI visibility killer, and the hardest one for teams to self-diagnose because the content looks fine by traditional standards.

If a model can’t extract a clean, trustworthy answer from a passage, it won’t cite it. Fixing this means editing with a different question in mind: not “does this page cover the keyword?” but “can someone, or something, lift a direct answer from this page and stand behind it?”

That’s a content quality bar most SEO-optimized writing doesn’t currently meet. Closing that gap is where AI visibility work gets granular, and where it compounds fastest.

AI Visibility vs. Traditional SEO: What Changes, What Doesn’t

The framing of AI search as a replacement for traditional SEO creates a false choice that leads teams to either ignore it entirely or over-rotate toward it. Neither is the right move.

The more accurate picture: the fundamentals haven’t changed, but their relative weight has, and a few things that were optional are now load-bearing.

| Aspect | Traditional SEO | AI Search Visibility |
|---|---|---|
| Primary signal | Domain authority + keyword relevance | Semantic structure + topical depth |
| Optimization target | Ranking position | Citability + Share of Answers |
| Content goal | Coverage + keyword match | Precision + direct answerability |
| Internal linking | PageRank distribution | Topical authority mapping |
| Technical SEO | Crawlability + indexation | Rendering + structured data + speed |
| Measurement | Rankings, impressions, CTR | Share of Answers, citation velocity |
| Content gaps | Keyword opportunities | Topical coverage coherence |

What stays the same: technical health, content quality, and genuine topical expertise still matter; they’re just evaluated differently. A fast, well-structured site with deep subject matter coverage is the foundation for both.

What changes is the optimization cycle. Traditional SEO optimizes toward a ranking. AI visibility optimizes toward an answer. That means content decisions need to be evaluated against citability, not just keyword fit. It means internal linking needs to follow topical logic, not just link equity. And it means measurement needs a feedback loop that goes beyond rank movement.

The teams that will compound fastest aren’t the ones who abandon their SEO programs for AEO (answer engine optimization). They’re the ones who extend their existing program’s instrumentation and execution to cover the answer layer, treating Share of Answers as a parallel KPI, not a replacement one.

Building an AI Visibility Strategy: The Execution-First Approach

Most AI visibility strategies stall at the audit. Teams identify gaps, produce recommendations, and then run into the same execution bottleneck that slows traditional SEO programs: too many pages, too few resources, and no clear prioritization logic.

The execution-first approach inverts that. Instead of optimizing for coverage and hoping citations follow, you start with the pages closest to conversion, identify exactly what’s suppressing their citability, and deploy fixes (structural changes, content edits, internal linking updates) in a closed loop, measured against Share of Answers movement rather than just rank shifts.

The sequencing matters:

- Audit for citability gaps — not just keyword gaps. Where is your content failing to deliver a direct, extractable answer?

- Fix architecture before publishing — broken topical structure and weak internal linking will suppress new content just as reliably as old content.

- Measure at the answer layer — track Share of Answers alongside traditional KPIs so you know which changes are actually moving the needle.

- Iterate by topic cluster — not by individual page. AI visibility compounds at the cluster level.
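
Because the last step works at the cluster level, one way to choose where to iterate next is to roll Share of Answers up by cluster and start with the weakest clusters that sit close to conversion. The sketch assumes each tracked answer is tagged with a cluster, a near-conversion flag, and whether you were cited; the prioritization rule (near-conversion clusters first, then lowest Share of Answers) is an illustrative assumption, not a prescribed method.

```python
from collections import defaultdict


def cluster_priorities(rows: list[tuple[str, bool, bool]]) -> list[tuple[str, float]]:
    """Rank clusters for the next optimization cycle.

    Each row is (cluster, near_conversion, cited) for one tracked answer.
    Clusters containing any near-conversion query come first; within each
    group, the lowest Share of Answers comes first.
    """
    totals: dict[str, int] = defaultdict(int)
    cited: dict[str, int] = defaultdict(int)
    commercial: dict[str, bool] = defaultdict(bool)
    for cluster, near_conversion, was_cited in rows:
        totals[cluster] += 1
        cited[cluster] += int(was_cited)
        commercial[cluster] |= near_conversion
    shares = {c: cited[c] / totals[c] for c in totals}
    ranked = sorted(shares, key=lambda c: (not commercial[c], shares[c]))
    return [(c, round(shares[c], 2)) for c in ranked]


rows = [
    ("pricing", True, False), ("pricing", True, True), ("pricing", True, False),
    ("integrations", True, True), ("integrations", True, True),
    ("glossary", False, False), ("glossary", False, False),
]
print(cluster_priorities(rows))  # [('pricing', 0.33), ('integrations', 1.0), ('glossary', 0.0)]
```

The glossary cluster has the lowest Share of Answers but lands last because none of its queries sit near conversion; swapping the sort key is all it takes to test a different prioritization rule.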

Stop Measuring Visibility You Can’t Act On

Most enterprise SEO programs are running on fragmented tooling: one platform for rankings, another for audits, another for content, and none of them talking to each other. The result is recommendations that don’t ship, optimizations that don’t compound, and reporting that can’t explain what’s actually moving revenue.

Quattr closes that loop. It’s an AI-first SEO and AEO platform that connects measurement to execution, tracking your Share of Answers and traditional organic performance in one place, then giving you the workflows to act on what you find.

- AI Citation Share Tracking — Monitor how often your content is cited in AI-generated answers across your topic space, tracked alongside traditional rank and traffic data.

- E-E-A-T Intelligence — Analyze how AI systems interpret your brand’s expertise, authority, and trustworthiness across queries, including sentiment, positioning, and competitive perception inside generated answers.

- Content Optimization Workflows — Identify and fix citability gaps, semantic structure issues, and topical coverage holes without switching between tools.

- Autonomous Internal Linking — Map and deploy internal linking that follows topical logic, not just link equity. This is the single highest-leverage structural change for AI visibility.

- AI-Driven Content Production — Scale content output without sacrificing quality, using workflows that maintain topical coherence across your entire cluster.

- SEO Expert Concierge — Dedicated strategic support that translates platform insights into prioritized execution, not just recommendations.

- Search Console + GA4 Integration — Connect visibility changes directly to clicks, conversions, and revenue so every optimization has a measurable business outcome.